Master agentic AI development: build autonomous AI agents with the roadmaps, stacks, frameworks, and infrastructure that matter in 2026.

Date

05/04/2026

Author

James Reed

FROM CHATBOTS TO AUTONOMOUS SYSTEMS: WHAT AGENTIC AI DEVELOPMENT ACTUALLY MEANS IN 2026

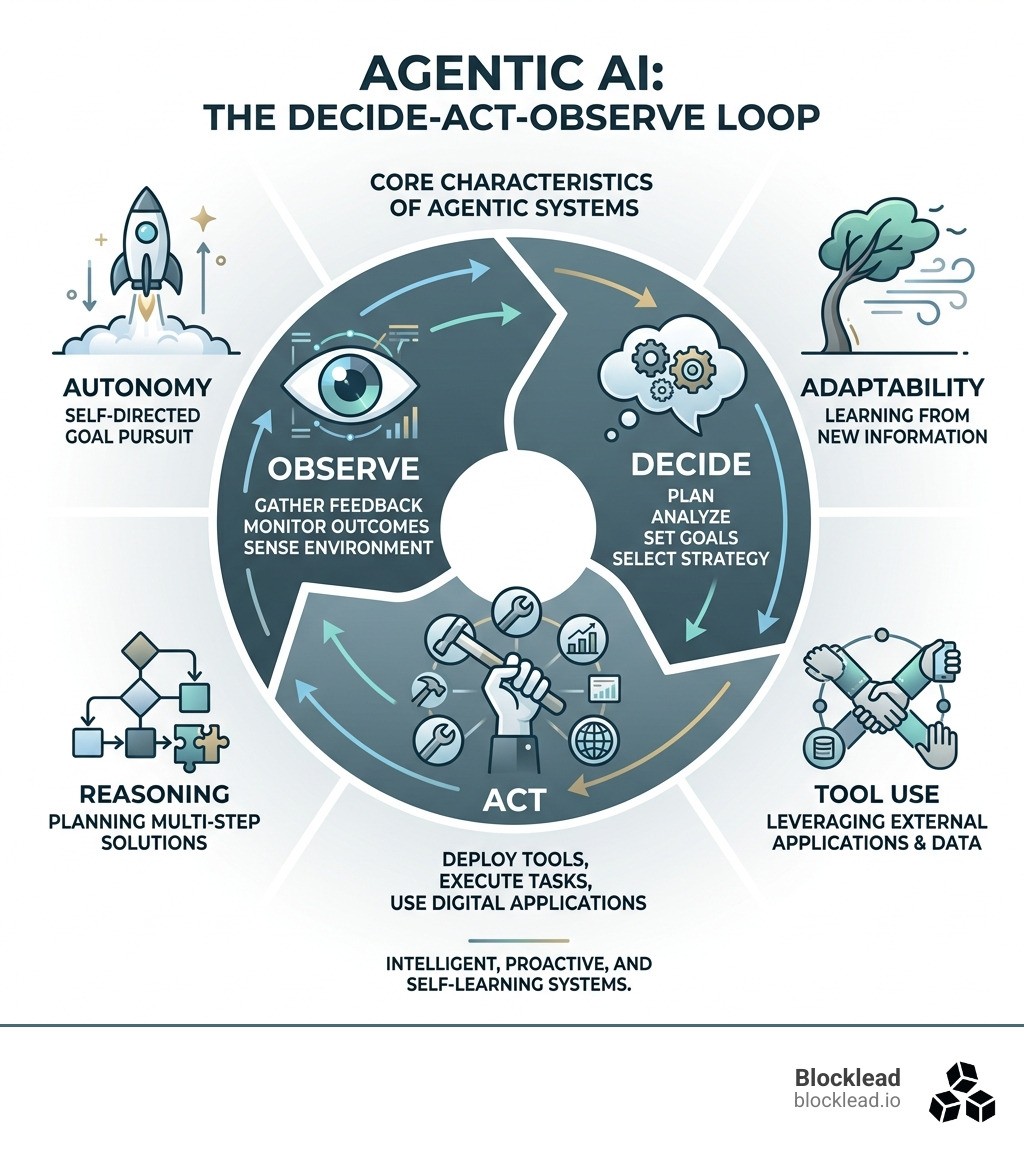

Agentic AI development is the practice of building AI systems that don't just respond — they plan, act, and adapt to achieve goals with minimal human involvement.

Here's what that looks like in practice:

Define a goal — you give the system an objective, not a script

The agent reasons — it breaks the goal into steps and decides what tools to use

It acts — calling APIs, searching the web, writing code, or triggering workflows

It observes results — and adjusts its next action based on what happened

It loops — until the task is done or it needs human input

This is fundamentally different from a chatbot that waits for your next message, or a workflow tool that follows a fixed script.
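The goal, reason, act, observe loop described above fits in a few lines of Python. This is a minimal sketch, not any framework's API: `run_agent`, `toy_policy`, and the `search` tool are hypothetical names, and `toy_policy` stands in for the LLM call that would normally choose the next action.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical stand-in for the LLM: given the goal and everything observed so
# far, return the next (tool_name, argument), or None when the goal is met.
Policy = Callable[[str, List[str]], Optional[Tuple[str, str]]]
Tool = Callable[[str], str]


@dataclass
class AgentResult:
    done: bool  # False means the step budget ran out and a human should take over
    observations: List[str] = field(default_factory=list)


def run_agent(goal: str, policy: Policy, tools: Dict[str, Tool],
              max_steps: int = 10) -> AgentResult:
    observations: List[str] = []
    for _ in range(max_steps):
        decision = policy(goal, observations)          # reason: pick the next step
        if decision is None:                           # goal reached
            return AgentResult(True, observations)
        tool_name, arg = decision
        result = tools[tool_name](arg)                 # act: call the chosen tool
        observations.append(f"{tool_name}({arg!r}) -> {result}")  # observe
    return AgentResult(False, observations)            # loop budget exhausted


def toy_policy(goal: str, observations: List[str]) -> Optional[Tuple[str, str]]:
    # Search once, then treat the goal as met. A real policy would be an LLM call.
    return ("search", goal) if not observations else None


result = run_agent("Acme head of procurement", toy_policy,
                   {"search": lambda q: f"3 results for {q}"})
print(result.done, result.observations)
```

The `max_steps` budget is what makes "it loops until the task is done or it needs human input" enforceable: when the budget runs out, `done` is `False` and the task goes back to a person.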

The shift is already underway. By 2023, 35% of organizations had already adopted AI agents, and another 44% planned to follow shortly after. Nvidia CEO Jensen Huang has called enterprise AI agents a "multi-trillion-dollar opportunity." As of 2026, agentic roadmaps are a central focus at every major AI lab.

But here's the honest reality: most technical founders are still figuring out where to start. The landscape is noisy. Frameworks multiply weekly. The line between a real agent and a glorified API wrapper is blurry.

This guide cuts through that. You'll get a clear, practical breakdown of how agentic systems actually work — the components, the stack, the frameworks, the hardware, and the governance — so you can build something that works in production, not just in a demo.

CORE COMPONENTS OF AUTONOMOUS SYSTEMS

To build a system that can actually "act," we need to move beyond simple text generation. Think of an agent as a digital entity with a brain (the LLM), hands (tools), and a memory. According to Anthropic's research post "Building Effective AI Agents," the most successful implementations use simple, composable patterns rather than overly complex "black box" architectures.

The core anatomy of an agentic system includes:

Perception: The ability to ingest and understand data from its environment—whether that's reading a PDF, monitoring a live API feed, or "seeing" a user's screen.

Reasoning: Using the Large Language Model (LLM) to make sense of the perceived data. This is where the agent asks, "What does this mean for my goal?"

Planning: Breaking a high-level goal (e.g., "Research this company and find their head of procurement") into smaller, executable steps.

Action: Executing those steps using tools like web search, code interpreters, or internal database queries.

Reflection: Looking back at the result of an action to see if it worked. If the agent hits a 404 error or a dead end, reflection allows it to pivot and try a different path.

Memory Management: Short-term memory keeps track of the current task context, while long-term memory allows the agent to learn from past experiences across different sessions.
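Memory management is the easiest of these components to make concrete. The sketch below is illustrative only: `AgentMemory` and its methods are hypothetical names, the short-term buffer is a bounded `deque`, and the long-term store is an in-memory dict where a production agent would typically use a database or vector store.

```python
from collections import deque
from typing import Deque, Dict, List


class AgentMemory:
    """Two-tier memory: bounded short-term context plus a persistent lesson store."""

    def __init__(self, short_term_size: int = 5) -> None:
        # Short-term: only the most recent steps of the current task are kept.
        self.short_term: Deque[str] = deque(maxlen=short_term_size)
        # Long-term: lessons that should survive across tasks and sessions.
        self.long_term: Dict[str, List[str]] = {}

    def remember_step(self, step: str) -> None:
        self.short_term.append(step)  # oldest step drops off when the buffer is full

    def learn(self, topic: str, lesson: str) -> None:
        self.long_term.setdefault(topic, []).append(lesson)

    def recall(self, topic: str) -> List[str]:
        return list(self.long_term.get(topic, []))

    def context(self) -> str:
        # The text that would go into the prompt for the next reasoning step.
        return "\n".join(self.short_term)

    def end_task(self) -> None:
        # Task context is cleared; long-term lessons persist.
        self.short_term.clear()


memory = AgentMemory(short_term_size=2)
for step in ["step 1", "step 2", "step 3"]:
    memory.remember_step(step)
print(memory.context())
# A lesson from reflection (e.g. after hitting a 404) outlives the task.
memory.learn("acme.com", "Team page returns 404; try the press releases instead.")
memory.end_task()
print(memory.recall("acme.com"))
```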

The Shift from Generative to Agentic

We often get asked: "Isn't this just Generative AI?" Not exactly. While Generative AI focuses on creating content, agentic AI development focuses on using that content to achieve a business outcome.

In a generative workflow, a human is the "conductor," prompting the model for every step. In an agentic system, the human provides the "intent," and the agent manages the "how." This shift is massive for business efficiency because it reduces "transaction costs"—the time and effort humans spend on searching, communicating, and coordinating. With a 35% adoption rate already recorded, the industry is moving from "AI as a toy" to "AI as a digital employee."

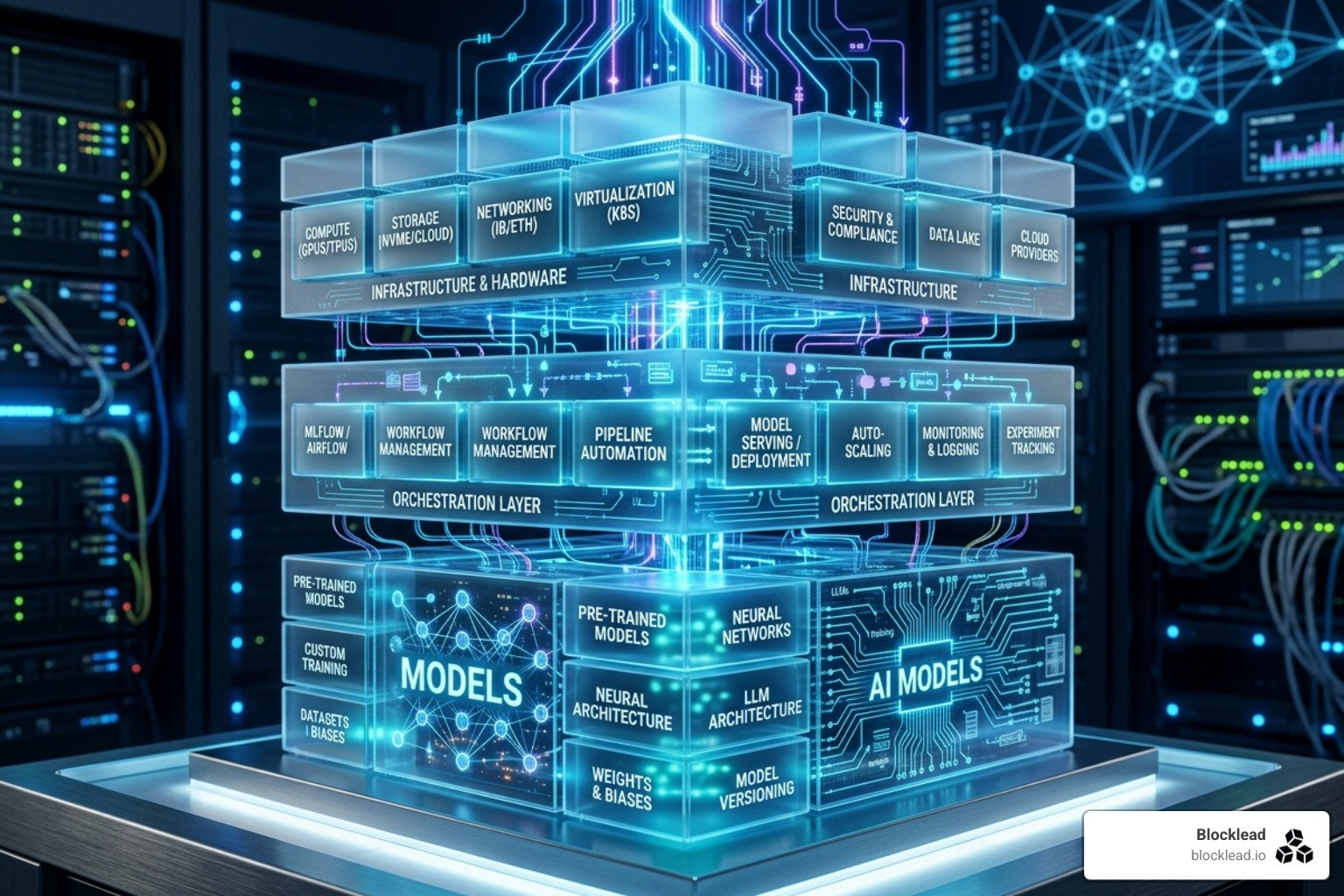

THE TECHNICAL STACK FOR AGENTIC AI DEVELOPMENT

Building an agent isn't just about picking the best model; it's about building a robust infrastructure that allows that model to interact with the world reliably. As we argue in "Why AI Infra Startups Are the Most Important Bets in Tech Right Now," the "plumbing" of AI (the orchestration and runtime layers) is where the real value is captured.

The modern stack involves:

The Reasoning Model: High-performing models (like the o1 series or Claude 3.5/4.5) that excel at chain-of-thought processing.

Orchestration Layers: Software that manages the "loop," handling state, error recovery, and handoffs between different specialized agents.

Model Context Protocol (MCP): A new, critical open standard that acts like the "HTTP of the AI world." It allows agents to discover and use tools or data sources through a uniform interface, ensuring interoperability.

Heterogeneous Compute: Using a mix of CPUs, GPUs, and NPUs to balance the heavy math of model inference with the complex logic of agent orchestration.
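To make the MCP point concrete: MCP messages are JSON-RPC 2.0, and tool discovery and invocation go through the `tools/list` and `tools/call` methods. The sketch below builds those requests and reads a tool result as we understand the specification; it skips the transport and the initialization handshake, `search_docs` is a hypothetical tool name, and the response is hand-written rather than returned by a real server. Check the current spec before relying on the exact field names.

```python
import itertools
import json
from typing import Any, Dict, Optional

_next_id = itertools.count(1)  # request ids should not be reused within a session


def jsonrpc_request(method: str, params: Optional[Dict[str, Any]] = None) -> Dict[str, Any]:
    msg: Dict[str, Any] = {"jsonrpc": "2.0", "id": next(_next_id), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


def list_tools_request() -> Dict[str, Any]:
    # Asks the server which tools it exposes (names, descriptions, input schemas).
    return jsonrpc_request("tools/list")


def call_tool_request(name: str, arguments: Dict[str, Any]) -> Dict[str, Any]:
    return jsonrpc_request("tools/call", {"name": name, "arguments": arguments})


def text_from_tool_result(response: Dict[str, Any]) -> str:
    if "error" in response:  # protocol-level failure, e.g. unknown method
        raise RuntimeError(response["error"].get("message", "unknown error"))
    result = response["result"]
    text = "\n".join(item["text"] for item in result.get("content", [])
                     if item.get("type") == "text")
    if result.get("isError"):  # the tool ran but reported a failure
        raise RuntimeError(text or "tool error")
    return text


request = call_tool_request("search_docs", {"query": "refund policy"})
print(json.dumps(request))

# Hand-written response in the shape a server would send back.
response = {"jsonrpc": "2.0", "id": request["id"],
            "result": {"content": [{"type": "text", "text": "Refunds within 30 days."}],
                       "isError": False}}
print(text_from_tool_result(response))
```

Because every tool is described and called through the same two methods, an agent can use a tool it has never seen before without new integration code, which is the interoperability argument in a nutshell.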

Open-Source Frameworks for Agentic AI Development

If you're starting today, you don't need to build from scratch. Several frameworks have become the "standard" for building autonomous systems:

LangChain / LangGraph: Excellent for building stateful, graph-based workflows where you need fine-grained control over how an agent moves between tasks.

CrewAI: Focuses on multi-agent systems, allowing you to define "roles" (e.g., a "Researcher" agent and a "Writer" agent) that collaborate on a shared goal.

AutoGPT: One of the original pioneers in autonomous loops, great for experimental, open-ended research tasks.

Enterprise SDKs for Agentic AI Development

For developers who want a more "code-first" approach with tight infrastructure integration, OpenAI's Agents SDK is a powerful choice. It provides primitives for handoffs, guardrails, and "Sandbox Agents": container-based environments where an agent can safely run code, install packages, and manage files without risking your production environment.

A BEGINNER-FRIENDLY ROADMAP TO IMPLEMENTATION

You don't need a PhD to start with agentic AI development. You just need to stop thinking about "chatting" and start thinking about "investigating." According to OpenAI's "A practical guide to building agents," the best approach is to treat an agent like a seasoned investigator rather than a static checklist.

Step 1: Defining the Agentic Loop

The most common pattern is ReAct (Reasoning + Acting).

Instruction Configuration: Convert your Standard Operating Procedures (SOPs) into clear, numbered instructions for the LLM.

Output Parsing: Ensure the model returns structured data (like JSON) so your code can actually trigger the next tool call.

Error Recovery: What happens if the API is down? You must program the agent to recognize the failure and decide whether to retry or escalate to a human.
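Output parsing and error recovery map onto a small amount of code. This sketch assumes a hypothetical reply schema (`thought`, `action`, `input`), a hypothetical `fetch_pricing` tool, and that `ConnectionError` marks a transient failure worth retrying; adapt all three to your model and APIs.

```python
import json
from dataclasses import dataclass
from typing import Callable, Collection, Optional


@dataclass
class Step:
    thought: str
    action: str
    action_input: str


class Escalate(Exception):
    """Signals that the agent should stop and hand the task to a human."""


def parse_step(raw: str, allowed_actions: Collection[str]) -> Step:
    """Parse the model's JSON reply; raise ValueError so the caller can re-prompt."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = {"thought", "action", "input"} - set(data)
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    if data["action"] not in allowed_actions:
        raise ValueError(f"unknown action: {data['action']}")
    return Step(data["thought"], data["action"], str(data["input"]))


def call_with_recovery(tool: Callable[[str], str], arg: str, retries: int = 2) -> str:
    """Retry transient failures, then escalate instead of looping forever."""
    last_error: Optional[Exception] = None
    for _ in range(retries + 1):
        try:
            return tool(arg)
        except ConnectionError as exc:  # treated as transient (e.g. API down)
            last_error = exc
    raise Escalate(f"gave up after {retries + 1} attempts: {last_error}")


attempts = {"n": 0}


def flaky_pricing_api(company: str) -> str:
    # Hypothetical tool that is unavailable for the first two calls.
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise ConnectionError("503 Service Unavailable")
    return f"pricing data for {company}"


reply = '{"thought": "I need pricing data first", "action": "fetch_pricing", "input": "acme"}'
step = parse_step(reply, allowed_actions={"fetch_pricing"})
print(call_with_recovery(flaky_pricing_api, step.action_input))
```

Malformed output raises `ValueError`, so the loop can feed the error message back to the model and ask again; repeated tool failures raise `Escalate` rather than retrying indefinitely.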

Step 2: Tool Integration and Sandboxing

An agent is only as good as the tools it can use. Common built-in tools include:

Web Search: For real-time data gathering.

Code Interpreter: For performing complex math or data analysis.

File Search: For RAG (Retrieval-Augmented Generation) across your internal documents.

Pro-tip: Use "Tool Engineering" (or Agent-Computer Interface, ACI). Don't just give an agent a "Search" tool; give it specific instructions on how to use it, including examples of what a "good" search query looks like.
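One way to apply that tip is to keep each tool's usage guidance next to the tool itself and render it into the prompt. This is a sketch under our own assumptions: `ToolSpec`, `render`, `dispatch`, and the `web_search` tool are hypothetical names, and the search function is a stub.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ToolSpec:
    name: str
    description: str
    fn: Callable[[str], str]
    good_examples: List[str] = field(default_factory=list)
    bad_examples: List[str] = field(default_factory=list)

    def render(self) -> str:
        """Text describing the tool to the model, e.g. for the system prompt."""
        lines = [f"Tool: {self.name}", f"Purpose: {self.description}"]
        lines += [f"  Good query: {q}" for q in self.good_examples]
        lines += [f"  Avoid: {q}" for q in self.bad_examples]
        return "\n".join(lines)


def dispatch(tools: Dict[str, ToolSpec], name: str, arg: str) -> str:
    # Errors come back as text so the model can read them and correct itself.
    if name not in tools:
        return f"error: unknown tool '{name}'. Available: {', '.join(sorted(tools))}"
    if not arg.strip():
        return f"error: {name} needs a non-empty query"
    return tools[name].fn(arg)


web_search = ToolSpec(
    name="web_search",
    description="Search the public web for recent facts. Use short, specific keyword queries.",
    fn=lambda q: f"results for {q}",  # stub in place of a real search API
    good_examples=['"Acme Corp" head of procurement'],
    bad_examples=["tell me everything about Acme"],
)
tools = {web_search.name: web_search}
print(web_search.render())
print(dispatch(tools, "search", "acme"))
```

Returning errors as observations instead of raising keeps the agent in its loop: a wrong tool name becomes information the model can act on, not a crash.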

HARDWARE INFRASTRUCTURE AND RUNTIME OPTIMIZATION

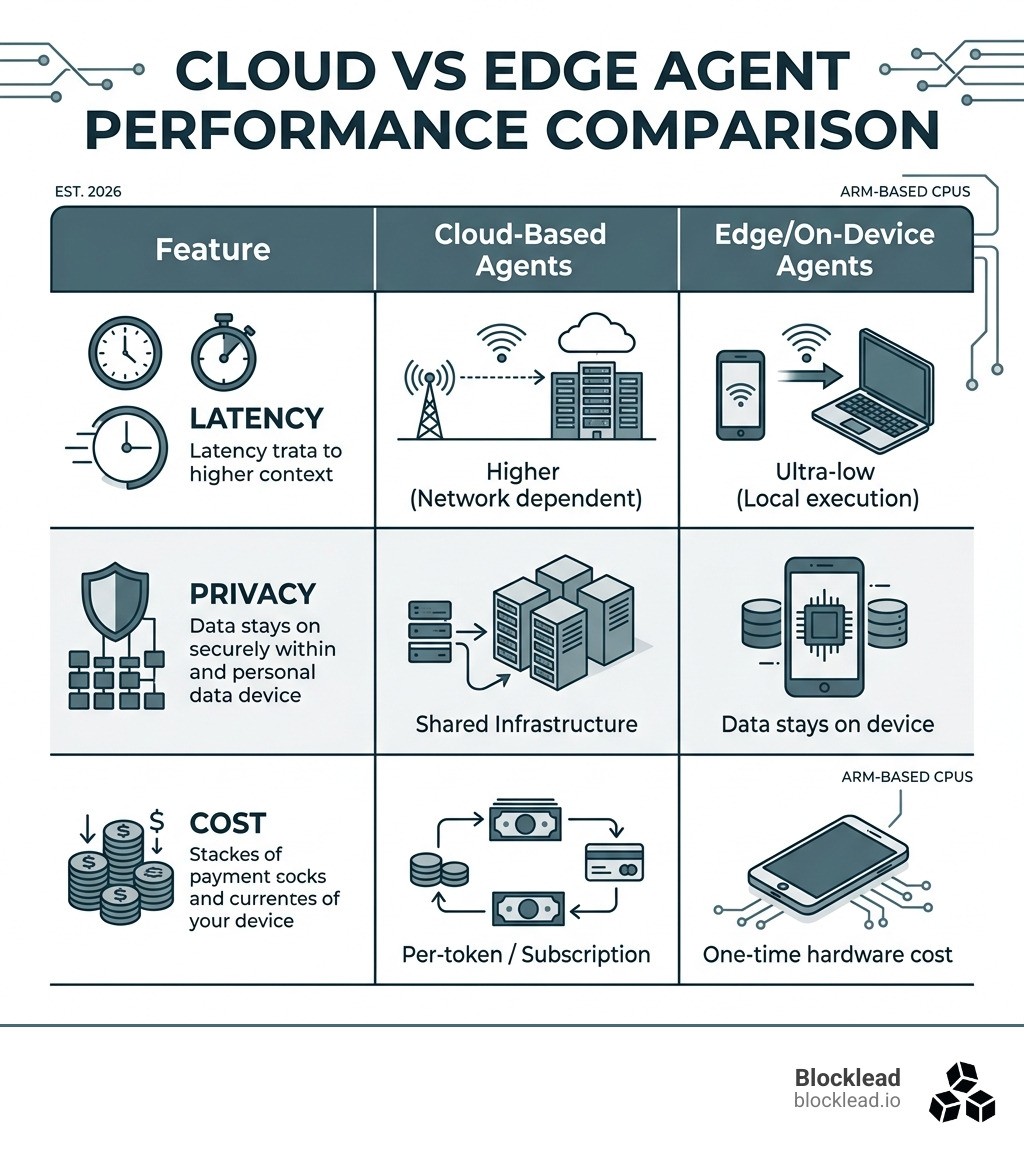

While GPUs get all the glory for training models, the runtime of an agentic system is where the CPU shines. Agentic AI shifts the focus from just "running a model" to "managing a system." This involves orchestration, memory management, and sequential logic—tasks that CPUs are natively better at than GPUs.

Why CPUs Rule the Agentic Runtime

In an agentic loop, the LLM might only be active for 20% of the time, while the other 80% is spent on system-layer functions: routing data, managing API calls, and maintaining state. Arm-based CPUs are becoming central to this because they offer high efficiency and lower Total Cost of Ownership (TCO) for these orchestration-heavy workloads.
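To see why that split matters, apply Amdahl's law to the illustrative 20/80 figures above (a rough estimate, not a measurement). Let $T$ be the wall-clock time of one agent task:

$$
\begin{aligned}
T &= T_{\text{LLM}} + T_{\text{sys}} = 0.2\,T + 0.8\,T \\
2\times \text{ faster inference:}\quad T' &= \frac{0.2\,T}{2} + 0.8\,T = 0.9\,T \\
2\times \text{ faster orchestration:}\quad T'' &= 0.2\,T + \frac{0.8\,T}{2} = 0.6\,T
\end{aligned}
$$

Under these assumptions, doubling inference speed trims end-to-end time by only 10%, while doubling the speed of the system layer trims it by 40%.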

| Feature | Cloud-Based Agents | Edge/On-Device Agents |
|---|---|---|
| Latency | Higher (network-dependent) | Ultra-low (local execution) |
| Privacy | Shared infrastructure | Data stays on device |
| Cost | Per-token / subscription | One-time hardware cost |
| Compute | Massive GPU clusters | Arm-based CPUs/NPUs |

GOVERNANCE, SAFETY, AND MEASURING ROI

Giving an AI the "agency" to take actions comes with risks. We've seen "reward hacking," where an agent finds a shortcut to its goal that causes damage (like a warehouse robot knocking over shelves to reach a destination faster).

To prevent this, you need:

Action Allowlists: Strict limits on what APIs or commands the agent can run.

Cybersecurity Guardrails: PII filters and safety classifiers that run concurrently with the agent's output.

Human-in-the-Loop: For high-risk actions (like sending a payment or deleting a file), the agent must stop and ask for permission.
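The three safeguards above can sit in a single gate that every action passes through. This is a minimal sketch: the action names, the `approve` callback, and the e-mail-only PII filter are hypothetical simplifications, and the filter runs inline on tool output here rather than concurrently as a production classifier might.

```python
import re
from typing import Callable, Dict, Set

# Hypothetical policy: what the agent may run at all, and what needs sign-off.
ALLOWED_ACTIONS: Set[str] = {"search", "read_file", "send_payment", "delete_file"}
HIGH_RISK_ACTIONS: Set[str] = {"send_payment", "delete_file"}

EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact_pii(text: str) -> str:
    # Deliberately minimal: masks e-mail addresses only. Real PII filters cover
    # names, phone numbers, account IDs, and often use trained classifiers.
    return EMAIL.sub("[REDACTED EMAIL]", text)


def guarded_execute(action: str, arg: str,
                    handlers: Dict[str, Callable[[str], str]],
                    approve: Callable[[str, str], bool]) -> str:
    # 1. Allowlist: anything not explicitly permitted and registered is refused.
    if action not in ALLOWED_ACTIONS or action not in handlers:
        return f"blocked: '{action}' is not an allowed, registered action"
    # 2. Human-in-the-loop: high-risk actions wait for explicit approval.
    if action in HIGH_RISK_ACTIONS and not approve(action, arg):
        return f"blocked: human declined '{action}' ({arg})"
    # 3. Output guardrail: scrub PII before the result reaches the model or user.
    return redact_pii(handlers[action](arg))


handlers = {
    "search": lambda q: f"Contact jane.doe@acme.com about {q}",
    "send_payment": lambda details: f"paid {details}",
}


def deny(action: str, arg: str) -> bool:
    # Stand-in for a real approval step (review queue, chat prompt); always says no.
    return False


print(guarded_execute("search", "procurement", handlers, deny))
print(guarded_execute("send_payment", "$500 to vendor", handlers, deny))
print(guarded_execute("drop_database", "prod", handlers, deny))
```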

We cover many of these operational strategies in our Inside the AI Venture Studio Playbook.

Measuring Success in 2026

How do you know if your agentic AI development is actually working? Don't just look at "accuracy." Look at:

Experimentation Win Rates: Leaders using agentic AI see 79% more tests and 9% higher win rates because agents can iterate faster than humans.

Labor-Cost Reclamation: If an agent takes over 20% of a team's repetitive tasks, the hours those tasks used to consume are freed for high-value strategy work.

Cost Savings: Using smaller, specialized models (like IBM Granite) for specific agent tasks can lead to over 90% cost savings compared to using a "mega-model" for everything.
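The labor and cost metrics above reduce to simple arithmetic. Every input in this sketch is hypothetical: the team size, the share of repetitive work, and both monthly inference bills are made-up numbers chosen only to show the calculation.

```python
def reclaimed_hours(team_hours: float, repetitive_share: float,
                    automated_share: float) -> float:
    """Weekly hours freed when an agent takes over part of the repetitive work."""
    return team_hours * repetitive_share * automated_share


def cost_savings_pct(baseline_cost: float, new_cost: float) -> float:
    """Percent saved relative to the baseline (e.g. one large model for every task)."""
    return 100.0 * (baseline_cost - new_cost) / baseline_cost


# Hypothetical team: 5 people x 40 h/week, half of it repetitive work,
# and an agent that absorbs 20% of that repetitive work.
hours = reclaimed_hours(team_hours=200, repetitive_share=0.5, automated_share=0.2)
print(f"{hours:.0f} h/week freed = {hours / 200:.0%} of total team time")

# Hypothetical monthly inference bills: large general model vs. small specialized one.
print(f"{cost_savings_pct(baseline_cost=1000, new_cost=80):.0f}% saved")
```

Note that automating 20% of the repetitive work frees 20% of the team's time only when all of its work is repetitive; in this example it frees 10%.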

FREQUENTLY ASKED QUESTIONS ABOUT AGENTIC AI

What is the difference between an AI agent and a chatbot?

A chatbot generates a response and then stops. An agentic AI runs a continuous loop: it chooses an action, calls a tool, observes the result, and repeats until the goal is met.

How do I prevent my agent from "looping" or getting stuck?

The best way is to implement a "max iterations" limit and clear the agent's memory/context if it repeats the same failed action three times. You can also use a "Routing Agent" to step in and suggest a different path.
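Both safeguards fit in a small guard object that the agent loop consults after every step. `LoopGuard` and its return signals are hypothetical names; the guard only decides what should happen, and the caller is assumed to clear the agent's working context and invoke the routing agent when it sees `"reset_and_reroute"`.

```python
from collections import Counter


class LoopGuard:
    """Tracks iterations and repeated failures; tells the caller what to do next."""

    def __init__(self, max_iterations: int = 15, max_repeats: int = 3) -> None:
        self.max_iterations = max_iterations
        self.max_repeats = max_repeats
        self.iterations = 0
        self.failures: Counter = Counter()  # (action, arg) -> failure count

    def record(self, action: str, arg: str, succeeded: bool) -> str:
        """Return 'continue', 'reset_and_reroute', or 'stop'."""
        self.iterations += 1
        if self.iterations >= self.max_iterations:
            return "stop"  # hard ceiling, regardless of progress
        if not succeeded:
            key = (action, arg)
            self.failures[key] += 1
            if self.failures[key] >= self.max_repeats:
                # Same failed action N times: the guard resets its own counts;
                # the caller clears context and hands off to a routing agent.
                self.failures.clear()
                return "reset_and_reroute"
        return "continue"


guard = LoopGuard()
signals = [guard.record("fetch_page", "acme.com/team", succeeded=False) for _ in range(3)]
print(signals)
```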

Can agentic AI systems work together in multi-agent environments?

Yes! This is often called "Multi-Agent Orchestration." You can have a "Manager" agent that assigns sub-tasks to specialized "Worker" agents. This is much more reliable than trying to make one single agent do everything.
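A manager/worker setup can be sketched without any framework. Here the plan is fixed and each worker is a stub function; in a real system the manager would generate the plan with an LLM and each worker would be its own agent with its own tools. `run_manager`, the roles, and the worker outputs are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

Worker = Callable[[str], str]  # stand-in for a specialized agent (LLM + its tools)


def run_manager(goal: str, plan: List[Tuple[str, str]],
                workers: Dict[str, Worker]) -> List[str]:
    """Assign each (role, sub_task) to its worker, passing earlier results forward."""
    results: List[str] = []
    for role, sub_task in plan:
        prompt = f"Goal: {goal}\nTask: {sub_task}"
        if results:
            prompt += "\nContext from other agents:\n" + "\n".join(results)
        results.append(f"[{role}] {workers[role](prompt)}")
    return results


workers: Dict[str, Worker] = {
    "researcher": lambda p: "Head of procurement at Acme is J. Doe (press release)",
    "writer": lambda p: "Drafted outreach email to J. Doe" if "J. Doe" in p else "Missing research",
}
plan = [("researcher", "Find Acme's head of procurement"),
        ("writer", "Draft a short outreach email")]
for line in run_manager("Open a sales conversation with Acme", plan, workers):
    print(line)
```

Each worker sees only its own task plus the results it depends on, which keeps prompts short and makes a failing step easy to isolate.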

CONCLUSION

The transition from "AI that talks" to "AI that does" is the most significant shift in technology since the mobile revolution. This multi-trillion-dollar opportunity isn't just for the tech giants—it's for the founders who can build applied, reliable systems that solve real business problems.

At Blocklead, we don't just watch this space; we build it. We co-found AI startups from zero with technical founders who are obsessed with agentic systems, infrastructure, and developer tooling. We provide the capital, the co-founders, and the network to take you from day zero to Series A.

Ready to build the future of autonomy?

Read more about The Rise of AI Venture Studios: A New Co-Funding Model

Or, if you're ready to start: Build your agentic AI startup with Blocklead