Discover the top AI infra startups powering 2026 AI: GPUs, inference neoclouds, energy solutions & agentic systems.

Date

05/04/2026

Author

James Reed

WHY AI INFRA STARTUPS ARE THE MOST IMPORTANT BETS IN TECH RIGHT NOW

AI infra startups are companies building the foundational technology layers — compute, storage, data pipelines, orchestration, and tooling — that make it possible to develop, deploy, and scale AI applications in the real world.

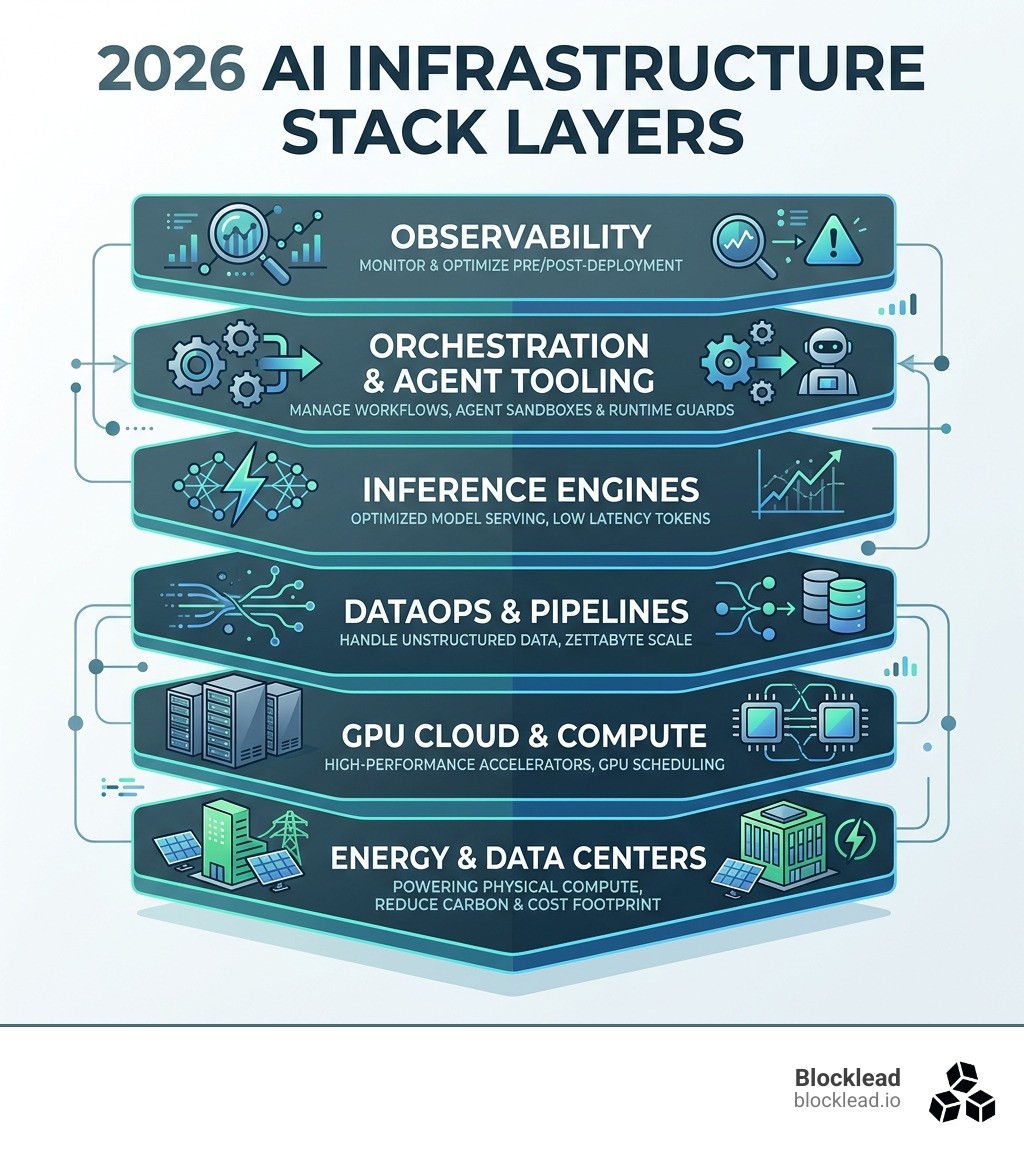

Here are the top categories to know in 2026:

| Category | What It Does | Example Problem Solved |
|---|---|---|
| GPU Cloud & Compute | Provides access to high-performance accelerators | Eliminating GPU waste and over-provisioning |
| Inference Engines | Optimizes model serving at scale | Low-latency, high-throughput token generation |
| DataOps & Pipelines | Manages data movement and transformation | Handling unstructured data at zettabyte scale |
| Agent Tooling | Sandboxes, memory, and runtime guards for AI agents | Keeping autonomous agents safe in production |
| Observability | Monitors models pre- and post-deployment | Catching drift, bias, and failures early |
| Energy & Data Centers | Powers the physical compute layer | Reducing the carbon and cost footprint of training |

Most people talk about AI applications. Fewer people talk about what actually makes those applications run.

That's a mistake.

Right now, only 5–10% of businesses have generative AI running in production. The gap between a promising demo and a reliable, scalable product is almost entirely an infrastructure problem.

The numbers back this up. The AI infrastructure market is on track to grow from $23.5 billion in 2021 to over $309 billion by 2031. Cloud infrastructure spending already hit $336 billion in 2024, with roughly half going directly to AI workloads. And in 2025 alone, AI infrastructure companies raised $84 billion across just 10 mega-rounds.
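To put the market figures above in perspective, here is a minimal sketch of the implied compound annual growth rate. It only uses the two endpoints quoted in this article ($23.5 billion in 2021 and $309 billion in 2031); the function name `cagr` is ours, and the result says nothing about how growth is distributed across individual years.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.25 means 25% per year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Endpoints quoted in the article, in billions of US dollars.
market_cagr = cagr(23.5, 309.0, 2031 - 2021)
print(f"Implied CAGR: {market_cagr:.1%}")
```

Under these assumptions the implied growth rate works out to a little over 29% per year, sustained for a decade.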

But here's what's interesting for early-stage founders: the biggest opportunities aren't always at the top. They're in the unsexy, hard-to-solve layers — GPU scheduling, data movement, agent sandboxing, context compression — where a small team with deep technical insight can build something genuinely irreplaceable.

As the saying often attributed to Mark Twain goes: when everyone is rushing to find gold, it's a good time to be selling picks and shovels.

This guide breaks down exactly what the AI infrastructure stack looks like in 2026, which problems are still wide open, and how technical founders can build in this space without getting buried by complexity before they even ship.

THE 2026 LANDSCAPE FOR AI INFRA STARTUPS

The market for AI infra startups is no longer just a subset of the cloud; it is the cloud. As of May 2026, we are seeing a massive divergence between general-purpose IT and the specialized stacks required for superintelligence. With the global market projected to reach $309 billion by 2031, the "picks and shovels" are becoming more valuable than the gold mines themselves.

We've seen a surge in investment momentum, particularly in the San Francisco Bay Area and Europe. According to 10 Fastest Growing AI Infrastructure Companies and Startups, the industry is being reshaped by massive capital inflows and a shift toward "sovereign AI"—where nations and enterprises demand localized, secure infrastructure that doesn't rely on a single global provider.

The 2025 and 2026 batches from Y Combinator have been a bellwether for this shift. Out of the hundreds of companies funded, a significant portion are AI Startups funded by Y Combinator that focus exclusively on infrastructure bottlenecks like GPU over-provisioning and agentic workflows. Compute scarcity remains the primary driver of innovation; when you can't get more chips, you build better software to make the chips you have work harder.

Why Infrastructure is the Engine of Superintelligence

If AI is the brain, infrastructure is the nervous system and the metabolic engine. You can't have superintelligence if your data packets are stuck in traffic or your GPUs are idling. Startups like Nscale are proving that the "engine of superintelligence" requires a full-stack approach—integrating sovereign data centers with renewable power and bare-metal performance.

The 2026 trend is moving away from abstracted, "black box" cloud services toward transparent, high-performance environments. Founders are realizing that to win, they need to offer more than just a virtual machine; they need to provide optimized environments where every cycle of the H100 or B200 is accounted for.

The Shift from Training to Inference Neoclouds

In 2024, everyone was obsessed with training. In 2026, the money is in inference. As models move into production, the demand for low-latency, high-throughput token generation has birthed a new category: the Inference Neocloud.

Companies like Evergrid AI are leading this charge by focusing on single-tenant AI clusters that prioritize stable capacity and predictable cost profiles. Unlike traditional hyperscalers, these neoclouds are built for the "4th Industrial Revolution," where edge computing and real-time reasoning are the standard, not the exception.

SOLVING THE "SYNCHRONIZED CAPACITY" PROBLEM

One of the biggest headaches we see founders face is "synchronized capacity"—the nightmare of trying to coordinate power, hardware, and software at the same time. It's not just about having a GPU; it's about having the right networking and storage to ensure that GPU isn't waiting on data.

Modal has become a favorite for developers by offering high-performance infrastructure that feels local. They've solved the "cold start" problem, allowing containers to scale instantly. This is critical because, in the AI world, a five-minute wait for a server to spin up is an eternity.

| Feature | Bare-Metal OS (e.g., Fluidstack) | Traditional Cloud Virtualization |
|---|---|---|
| Provisioning Speed | Sub-2 minutes | 5-15 minutes |
| Performance Overhead | Near zero | 5-10% (hypervisor tax) |
| Isolation | Physical/hardware level | Software/virtual level |
| Control | Total ownership of kernel/drivers | Restricted by provider |

By using resource prediction and automated failure detection, AI infra startups are now achieving 99.98% uptime, a necessity for enterprise-grade applications.
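It helps to translate an uptime percentage into a downtime budget. The sketch below is plain arithmetic on the 99.98% figure; the helper name `allowed_downtime_minutes` and the choice of a 365-day year are our assumptions, not part of any provider's SLA definition.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def allowed_downtime_minutes(uptime_pct: float,
                             period_hours: float = HOURS_PER_YEAR) -> float:
    """Minutes of downtime permitted over a period at a given uptime percentage."""
    if not 0 <= uptime_pct <= 100:
        raise ValueError("uptime_pct must be between 0 and 100")
    return (1 - uptime_pct / 100) * period_hours * 60


yearly_budget = allowed_downtime_minutes(99.98)
print(f"99.98% uptime allows about {yearly_budget:.0f} minutes of downtime per year")
```

At 99.98%, that is roughly 105 minutes a year, which is why automated failure detection matters more than manual incident response.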

Breaking the CUDA Moat with AI Infra Startups

For years, NVIDIA's CUDA was the "moat" that kept everyone locked in. But in 2026, we are seeing the rise of hardware-agnostic layers. Startups like Fluidstack are building "Infrastructure for Abundant Intelligence" by creating bare-metal operating systems that can run across different GPU architectures.

This democratization allows teams to tap into multi-cloud capacity pools, using whatever hardware is available—whether it's NVIDIA, AMD, or specialized ASICs—without rewriting their entire codebase. Sub-second cold starts and elastic scaling are no longer luxuries; they are the baseline for survival.

DataOps 2.0 and the 612 Zettabyte Bottleneck

Unstructured data is projected to reach a staggering 612 zettabytes by 2030. Traditional databases simply aren't built to handle this. This has led to the rise of DataOps 2.0, where the focus is on "From Shovel to Model."

Radiant and other leaders are integrating the data lakehouse architecture directly into the AI stack. By focusing on RAG (Retrieval-Augmented Generation) stacks and context compression, these startups help enterprises manage massive datasets without the "context bloat" that typically slows down LLMs and drives up costs.
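As a rough illustration of what keeping "context bloat" in check can look like, here is a minimal sketch that greedily packs the highest-scoring retrieved chunks into a fixed token budget. This is a simple stand-in, not how any company named here implements context compression. The `Chunk` type, the relevance scores, and the whitespace-based token count are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    text: str
    score: float  # relevance score from a retriever (hypothetical values)


def approx_tokens(text: str) -> int:
    """Crude token estimate: whitespace-separated words (an assumption)."""
    return len(text.split())


def pack_context(chunks: list[Chunk], token_budget: int) -> list[Chunk]:
    """Greedily keep the most relevant chunks that still fit in the budget."""
    selected: list[Chunk] = []
    used = 0
    for chunk in sorted(chunks, key=lambda c: c.score, reverse=True):
        cost = approx_tokens(chunk.text)
        if used + cost <= token_budget:
            selected.append(chunk)
            used += cost
    return selected


chunks = [
    Chunk("GPU scheduling reduces idle time", 0.9),
    Chunk("Unrelated marketing copy about pricing tiers and discounts", 0.2),
    Chunk("Context compression trims retrieved text", 0.7),
]
packed = pack_context(chunks, token_budget=10)
print([c.text for c in packed])
```

A production system would use the model's real tokenizer and likely summarize or deduplicate chunks as well; the point here is only that a hard budget forces low-relevance text out of the prompt.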

BUILDING INFRASTRUCTURE FOR AGENTIC AI SYSTEMS

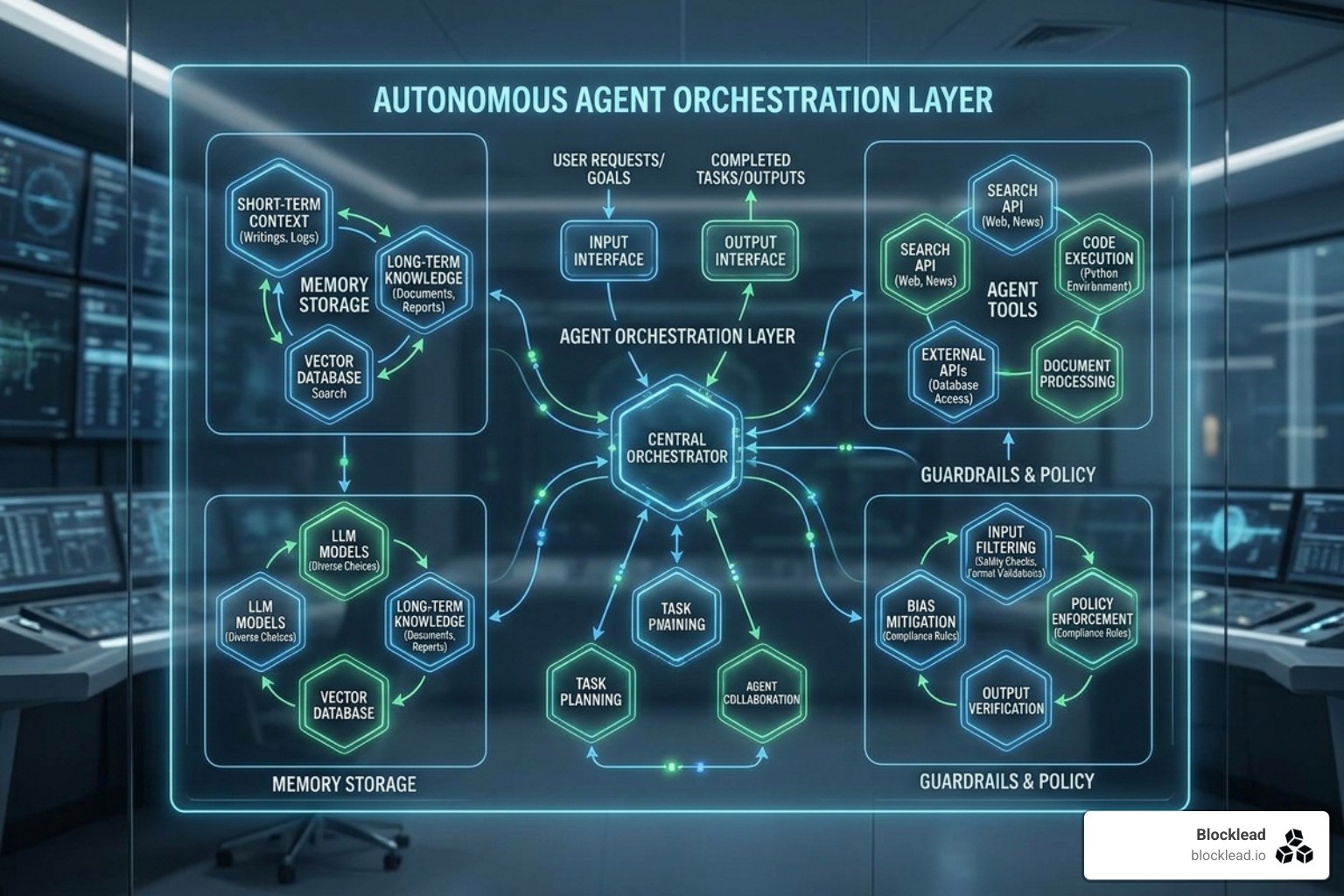

The next frontier isn't just a chatbot; it's an autonomous agent that can take actions. But giving an AI the keys to your API is terrifying. That's why we are seeing a massive surge in agentic infrastructure.

At Blocklead, we've seen how critical this layer is. As we discuss in our Inside the AI Venture Studio Playbook, building for agents requires a completely different mindset. You need secure sandboxes, digital twins for APIs, and runtime guards that can block an incorrect action before it happens.
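To make the idea of a runtime guard concrete, here is a minimal sketch of a check that runs before an agent's proposed action is executed. Everything in it is hypothetical: the `Action` type, the rule helpers, the tool names, and the refund limit are illustrative and do not describe any specific product.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Optional


@dataclass
class Action:
    tool: str
    args: dict[str, Any] = field(default_factory=dict)


class ActionBlocked(Exception):
    """Raised when a rule rejects an action before it runs."""


# A rule returns a reason string to block the action, or None to allow it.
Rule = Callable[[Action], Optional[str]]


def only_allowed_tools(allowed: set[str]) -> Rule:
    def rule(action: Action) -> Optional[str]:
        if action.tool not in allowed:
            return f"tool '{action.tool}' is not on the allowlist"
        return None
    return rule


def max_amount(limit: float) -> Rule:
    def rule(action: Action) -> Optional[str]:
        amount = action.args.get("amount", 0)
        if amount > limit:
            return f"amount {amount} exceeds limit {limit}"
        return None
    return rule


def guarded_execute(action: Action, rules: list[Rule],
                    execute: Callable[[Action], Any]) -> Any:
    """Run every rule first; only call `execute` if none of them objects."""
    for rule in rules:
        reason = rule(action)
        if reason is not None:
            raise ActionBlocked(reason)
    return execute(action)


# Usage with a stand-in executor that just records what ran.
executed: list[str] = []


def fake_execute(action: Action) -> str:
    executed.append(action.tool)
    return "ok"


rules = [only_allowed_tools({"refund", "lookup_order"}), max_amount(100.0)]
result = guarded_execute(Action("lookup_order", {"order_id": "A1"}), rules, fake_execute)

try:
    guarded_execute(Action("refund", {"amount": 5000}), rules, fake_execute)
    blocked_reason = ""
except ActionBlocked as exc:
    blocked_reason = str(exc)

print(result, "|", blocked_reason)
```

The design choice worth noting is that the guard sits between the agent's decision and the side effect, so a rejected action never reaches the real API.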

Why Agentic AI Needs Specialized AI Infra Startups

Agents require "reasoning engines" rather than just simple inference. They need memory layers that persist across sessions and the ability to orchestrate multi-model workflows. This complexity is why the Rise of AI Venture Studios has become a popular model for co-funding these specialized startups.

Observability is also evolving. It's no longer just about "is the server up?" It's about "did the agent just hallucinate a legal contract?" Tracking these "reasoning traces" is a massive opportunity for new infra players.

Scaling Beyond the Sandbox

Moving an agent from a playground to production requires self-healing pipelines and SOC2 compliance. The Infrastructure Startups funded by Y Combinator 2026 are increasingly focused on these "boring but essential" features. Security isolation is paramount; if one agent is compromised, you cannot allow it to infect the rest of the network.

THE ENERGY FRONTIER: POWERING THE NEXT GIGASCALE FACTORY

You can't talk about AI infrastructure in 2026 without talking about power. Training a model like GPT-3 consumed 1,287 MWh of electricity—roughly the same as 120 US households use in a year. As we move toward larger models, the "energy wall" is the biggest threat to progress.
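The household comparison is easy to sanity-check. This short sketch derives the per-household consumption implied by the two numbers in the paragraph above; the constant and function names are ours, and the implied figure is only as accurate as the 1,287 MWh and 120-household inputs.

```python
TRAINING_ENERGY_MWH = 1287.0  # figure quoted in the article
HOUSEHOLDS = 120              # figure quoted in the article

# Annual consumption per household implied by the comparison.
implied_mwh_per_household = TRAINING_ENERGY_MWH / HOUSEHOLDS


def household_years(energy_mwh: float, mwh_per_household_year: float) -> float:
    """How many household-years of electricity a given energy amount represents."""
    if mwh_per_household_year <= 0:
        raise ValueError("mwh_per_household_year must be positive")
    return energy_mwh / mwh_per_household_year


print(f"Implied usage: {implied_mwh_per_household:.1f} MWh per household per year")
```

The comparison therefore assumes a household uses a little under 11 MWh per year; plugging a different regional average into `household_years` changes the headline number accordingly.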

Crusoe has pioneered the "AI Factory" model, using renewable and stranded energy (like flared gas or excess wind power) to fuel data centers. Their 900 MW campuses are modular, meaning they can be deployed wherever power is cheapest and cleanest.

Sustainable AI Infra Startups and the 1,287 MWh Problem

The 1,287 MWh problem is driving a wave of innovation in cooling and battery tech. We are seeing 10 European AI Companies Challenging Silicon Valley by focusing on liquid cooling and iron-air batteries that can store renewable energy for days. Sustainable AI infra startups are proving that high-performance compute doesn't have to mean a high carbon footprint.

Vertical Integration: From Shovel to Model

The most successful companies are becoming vertically integrated. They don't just rent cloud space; they own the power grid synchronization and the physical land. This "capital selectivity" means that only those who can secure gigascale factories will survive the next decade.

Our research on How Co-Funding Accelerates AI Startup Success shows that investors are increasingly looking for teams that understand the physical constraints of the grid, not just the digital constraints of the code.

NAVIGATING THE AI INFRASTRUCTURE GOLD RUSH

The investment momentum is staggering. With $84 billion raised in mega-rounds in 2025, the "valuation ceiling" for AI infra startups continues to rise. But for founders in Latin America and Europe, the game is different. 10 Rising AI Startups in Latin America 2026 are often focusing on efficiency and "doing more with less," creating a unique seed-stage alpha for investors.

Evaluating AI Infra Startups for Enterprise Scale

For enterprises, choosing a provider is no longer just about the lowest price. It's about the cost-to-performance ratio, vendor neutrality, and reliability SLAs. AI Startups Europe often leads in compliance and data sovereignty, which is a major selling point for global corporations that need to navigate strict regulatory environments.

The Future Roadmap: SSMs, MoE, and Photonic Chips

The roadmap for the next few years is incredibly exciting. We are moving beyond the standard Transformer architecture toward State-Space Models (SSMs) like Mamba and Mixture-of-Experts (MoE) designs that are far more efficient.

Perhaps most exciting is the rise of photonic chips. By using light instead of electricity to move data, these chips promise very low energy consumption for operations such as convolutions. If that promise holds at scale, it could greatly ease the data movement bottleneck.

FREQUENTLY ASKED QUESTIONS ABOUT AI INFRASTRUCTURE

What is the projected market size for AI infrastructure by 2031?

The AI infrastructure market is expected to reach over $309 billion by 2031, growing from just $23.5 billion in 2021. This growth is driven by the massive shift toward generative AI and the need for specialized compute and data layers.

How do AI infra startups solve the GPU waste problem?

Most GPUs in traditional clouds sit idle for significant periods. Modern AI infra startups use resource prediction, automated optimization, and "spot instance" orchestration to recover this idle capacity, often cutting compute costs by up to 80%.
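The link between idle time and cost can be sketched with simple arithmetic: if you pay for every hour but only use a fraction of them, the price per useful hour is the list price divided by utilization. The hourly price and utilization figures below are hypothetical, chosen only to show the shape of the calculation.

```python
def cost_per_useful_gpu_hour(hourly_price: float, utilization: float) -> float:
    """Effective price of one hour of actual work at a given utilization (0-1]."""
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in the interval (0, 1]")
    return hourly_price / utilization


def savings_fraction(util_before: float, util_after: float) -> float:
    """Fractional drop in cost per useful hour at an unchanged hourly price."""
    if not (0 < util_before <= 1 and 0 < util_after <= 1):
        raise ValueError("utilization values must be in the interval (0, 1]")
    return 1 - util_before / util_after


# Hypothetical example: a $2.00/hour GPU that is busy 25% of the time.
effective = cost_per_useful_gpu_hour(2.00, 0.25)
saved = savings_fraction(0.20, 0.80)
print(f"Effective cost: ${effective:.2f} per useful hour")
print(f"Raising utilization from 20% to 80% saves {saved:.0%}")
```

In this toy model, savings on the order of 80% require starting from very low utilization, which is consistent with the "up to" framing of that figure.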

What is the difference between an Inference Neocloud and a Hyperscaler?

A hyperscaler (like AWS or Google Cloud) provides a broad range of general-purpose services. An Inference Neocloud (like Evergrid or Nscale) is purpose-built for AI, offering single-tenant isolation, bare-metal performance, and pricing models optimized for token throughput rather than just raw server time.
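To see why pricing by token throughput differs from pricing by server time, here is a minimal sketch that converts an hourly server price into a cost per million generated tokens. The $3.00 hourly price and the throughput numbers are hypothetical and do not reflect any provider's actual rates.

```python
def cost_per_million_tokens(hourly_price: float, tokens_per_second: float) -> float:
    """Cost of generating one million tokens on a server billed by the hour."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    tokens_per_hour = tokens_per_second * 3600
    return hourly_price / tokens_per_hour * 1_000_000


# Same hypothetical server price, two different sustained throughputs.
slow = cost_per_million_tokens(3.00, 500)
fast = cost_per_million_tokens(3.00, 1000)
print(f"500 tok/s:  ${slow:.2f} per million tokens")
print(f"1000 tok/s: ${fast:.2f} per million tokens")
```

Doubling sustained throughput halves the cost per token at the same hourly price, which is why serving-stack optimization shows up directly in a token-based price list.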

CONCLUSION

Building the backbone of AI is the hardest challenge in tech today, but it's also the most rewarding. At Blocklead, we are dedicated to helping technical founders navigate this complexity. We co-found AI startups from zero, providing the capital, operators, and network needed to go from a "crazy idea" to a Series A powerhouse.

Whether you are building agentic systems, applied AI, or the next generation of developer tooling, we are here to be your co-founder from day zero. The infrastructure gold rush is just beginning, and we want to help you build the tools that will power the future.

For more information on how we can help you build your startup, check out our Blocklead services.