| Key Insight | Explanation |
|---|---|
| AI product development is end-to-end | It spans discovery, data strategy, model building, deployment, and ongoing iteration, not just writing a model. |
| Data quality is the primary failure point | Poor or incomplete training data is cited as the top reason AI projects fail to reach production. |
| Agentic systems require different architecture | AI agents that take autonomous action need memory, tool access, and feedback loops that standard ML pipelines don't include. |
| Speed to market has compressed dramatically | As of 2026, well-resourced teams are shipping initial AI products in 8-12 weeks using modern tooling and pre-trained foundation models. |
| Practitioner involvement beats advisory-only | Teams with engineers who've shipped AI in production make faster, better architectural decisions than those relying on outside consultants. |
| Human-centered design still matters | Optimizing purely for model performance while ignoring user experience is a documented cause of low adoption in AI products. |

Table of Contents

What Is AI Product Development?

How AI Product Development Works: The Full Lifecycle

Key Benefits of AI Product Development in 2026

Common Challenges and Costly Mistakes

Best Practices for AI Product Development in 2026

Sources & References

Frequently Asked Questions

Conclusion

Most technical founders have a working prototype before they have a product. That gap, from a demo that impresses in a meeting to a system that holds up under real user load, is exactly where AI product development lives. AI product development is the end-to-end process of designing, building, deploying, and iterating on products that use artificial intelligence as a core functional component. It covers everything from defining the problem worth solving to maintaining model performance after launch. Understanding this process isn't optional for founders building in this space — it's the difference between shipping something customers pay for and spending 18 months rebuilding the same prototype.

This guide covers the full AI product development lifecycle, the most common failure modes (with data to back them up), and the practical frameworks that production teams actually use in 2026.

What Is AI Product Development?

AI product development is the structured process of building products where artificial intelligence drives core functionality — from research and ideation through deployment and continuous improvement. It differs from traditional software development because the behavior of an AI system emerges from data and training, not just code logic.

A Clear Definition Worth Extracting

According to Future Processing, AI product development is "the end-to-end process of designing, building, launching, and scaling products that have artificial intelligence at their core." [1] That's a useful starting point. In practice, it means the development team must manage not just software engineering concerns but also data pipelines, model selection, evaluation frameworks, and feedback loops that keep the system improving post-launch.

Three distinct product types fall under this umbrella:

Applied AI products: Software that uses pre-trained foundation models (large language models, vision models) to deliver a specific user-facing function — think AI writing assistants, document analysis tools, or customer support agents.

Agentic systems: Products where AI agents (autonomous software entities that perceive inputs, reason, and take actions) operate with minimal human intervention to complete multi-step tasks.

AI infrastructure: Developer tools, APIs, and platforms that other teams use to build AI products — model serving infrastructure, vector databases, evaluation frameworks, and observability tooling.

Why the Definition Matters for Founders

The category you're building in determines your architecture, your data strategy, and your go-to-market timeline. A team building an applied AI product on top of an existing foundation model can ship in weeks. A team building proprietary AI infrastructure from scratch is looking at a fundamentally different investment of time and capital.

IBM's research on AI in product development notes that the term covers a wide range of capabilities, from predictive analytics to generative AI to autonomous decision-making systems. [2] Each requires a different skill set and a different production architecture. Getting clear on which one you're building is the first real decision in the process.

Pro Tip: Before writing a single line of model code, write a one-paragraph description of what the AI component does that a non-technical customer could read and immediately understand. If you can't write that paragraph, you haven't defined the product yet.

How AI Product Development Works: The Full Lifecycle

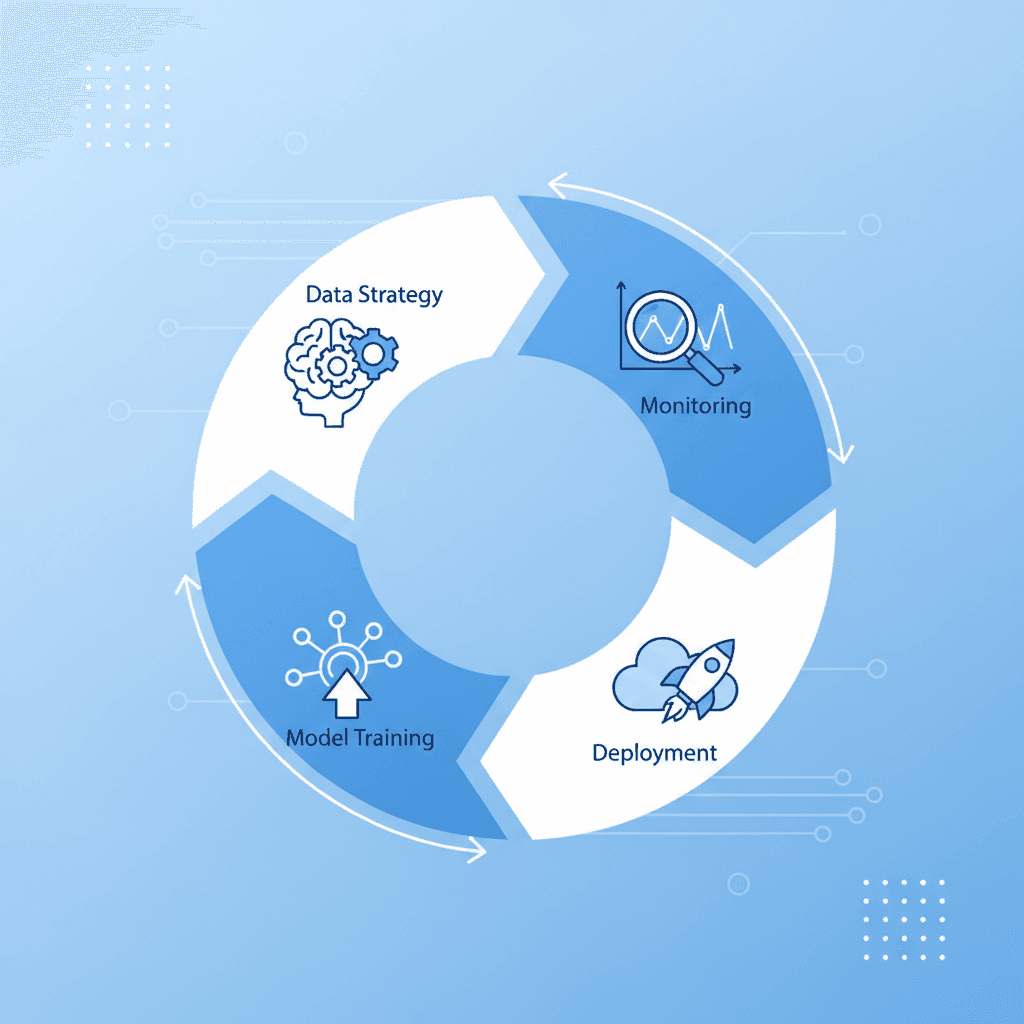

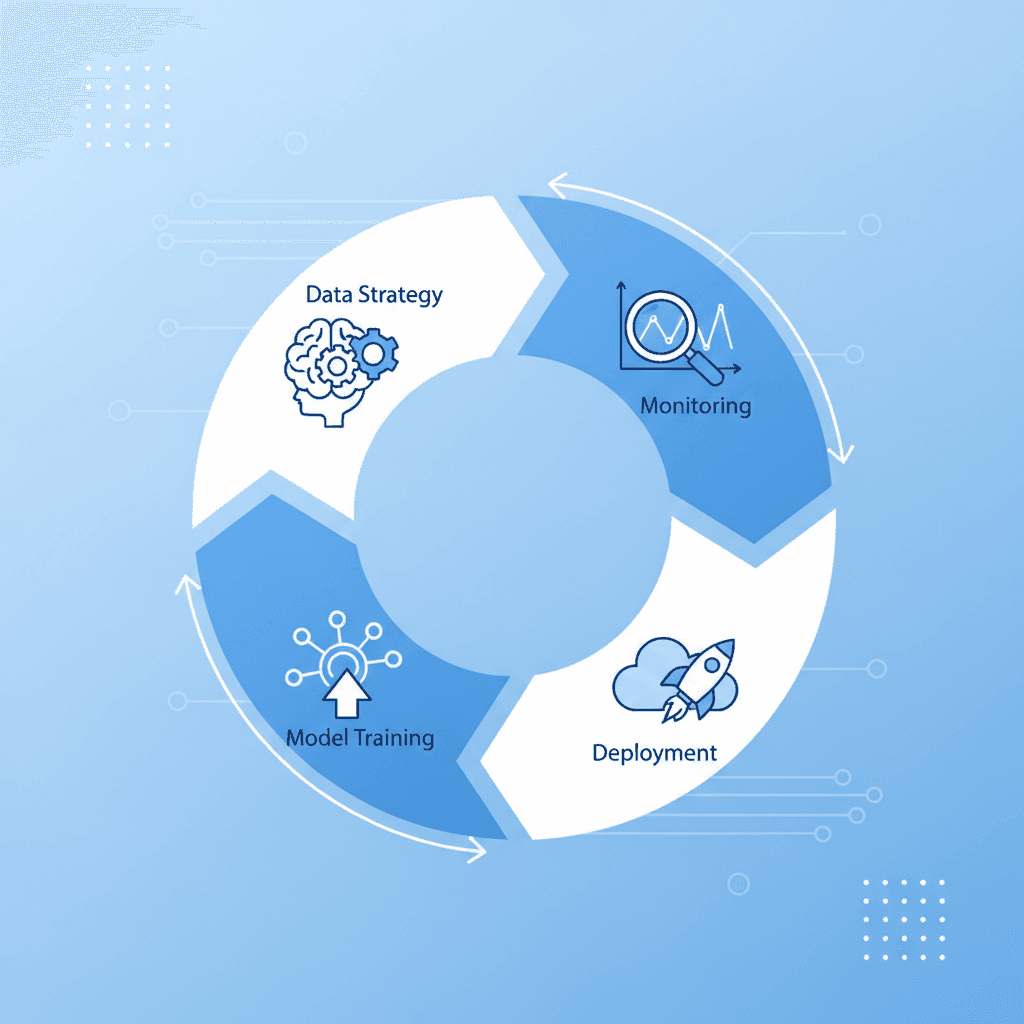

AI product development follows a lifecycle that's more iterative than traditional software development, with explicit stages for data, model evaluation, and production monitoring that have no direct equivalent in conventional engineering.

The Core Stages

The Scaled Agile Framework (SAFe), widely adopted for enterprise AI delivery, describes the AI product lifecycle as a continuous loop rather than a linear sequence. [3] Here's how production teams structure it:

Problem definition and scoping: Identify the specific decision or task the AI will handle. Define success metrics before touching data. A common mistake is scoping too broadly — "improve customer experience with AI" is not a product definition.

Data discovery and strategy: Audit existing data sources, identify gaps, and design collection or labeling pipelines. This stage often takes longer than founders expect. Data quality directly determines model ceiling.

Model selection and architecture: Decide whether to fine-tune an existing foundation model, build a retrieval-augmented generation (RAG) pipeline, train a custom model, or orchestrate multiple models. Each choice has different cost, latency, and maintenance implications.

Prototype and evaluation: Build a minimum testable system and evaluate it against the success metrics defined in stage one. Use held-out test sets and, where possible, real user feedback.

Production deployment: Move from notebook or staging environment to production infrastructure. This includes model serving, monitoring, alerting, and fallback logic.

Iteration and continuous improvement: Collect production feedback, monitor for model drift (degradation in model performance over time due to changing input distributions), and retrain or update the model on a regular cadence.
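The link between stage one (success metrics) and stage four (evaluation) can be sketched in a few lines. This is a minimal illustration, not a prescribed framework: the `SuccessCriteria` class, the thresholds, and the sample labels are all hypothetical, and a real project would pick metrics that match its own task.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCriteria:
    """Targets agreed during problem definition, before any data work (hypothetical values)."""
    min_accuracy: float
    min_recall_positive: float


def evaluate(predictions: list[int], labels: list[int]) -> dict[str, float]:
    """Compute accuracy and positive-class recall on a held-out test set."""
    if len(predictions) != len(labels) or not labels:
        raise ValueError("predictions and labels must be non-empty and of equal length")
    correct = sum(p == y for p, y in zip(predictions, labels))
    true_pos = sum(p == 1 and y == 1 for p, y in zip(predictions, labels))
    actual_pos = sum(y == 1 for y in labels)
    recall = true_pos / actual_pos if actual_pos else 0.0
    return {"accuracy": correct / len(labels), "recall_positive": recall}


def meets_criteria(metrics: dict[str, float], criteria: SuccessCriteria) -> bool:
    """The prototype passes only if every pre-agreed target is met."""
    return (
        metrics["accuracy"] >= criteria.min_accuracy
        and metrics["recall_positive"] >= criteria.min_recall_positive
    )


# Illustrative held-out labels and model outputs (made up for this sketch).
criteria = SuccessCriteria(min_accuracy=0.8, min_recall_positive=0.7)
held_out_labels = [1, 0, 1, 1, 0, 0, 1, 0, 1, 0]
model_predictions = [1, 0, 1, 0, 0, 0, 1, 1, 1, 0]

metrics = evaluate(model_predictions, held_out_labels)
print(metrics, meets_criteria(metrics, criteria))
```

Writing the criteria down as data, rather than leaving them in a planning document, makes it harder to move the goalposts once prototype results come in.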

Where Agentic Systems Change the Equation

Building agentic systems, AI agents that autonomously plan and execute multi-step tasks, adds several layers of complexity. These systems need tool integrations, memory management, and guardrails that prevent agents from taking unintended actions. Kearney's 2026 analysis highlights that AI agents are increasingly supporting specific decisions across the full product lifecycle, providing "deeper, more accurate, and more timely insights" than static models. [4] That capability comes with real engineering overhead that teams need to plan for explicitly.
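To make the tool, memory, and guardrail pieces concrete, here is a minimal sketch of an agent loop. Everything in it is an assumption for illustration: the two tools, the allowlist, the step limit, and `stub_planner`, which stands in for a real model call. It is not the API of any orchestration framework.

```python
from typing import Callable, Optional


# Hypothetical tools; in a real system these would call APIs or databases.
def lookup_order(order_id: str) -> str:
    return f"order {order_id}: shipped"


def issue_refund(order_id: str) -> str:
    return f"refund issued for {order_id}"


TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_order": lookup_order,
    "issue_refund": issue_refund,
}
# Guardrail: only these tools may run without human approval.
ALLOWED_WITHOUT_APPROVAL = {"lookup_order"}
# Guardrail: hard cap on steps so a confused planner cannot loop forever.
MAX_STEPS = 5

Action = Optional[tuple[str, str]]


def stub_planner(goal: str, memory: list[str]) -> Action:
    """Stand-in for a model call: returns (tool_name, argument), or None when done."""
    if not memory:
        return ("lookup_order", "A123")
    if len(memory) == 1:
        return ("issue_refund", "A123")
    return None


def run_agent(goal: str, planner: Callable[[str, list[str]], Action]) -> list[str]:
    """Plan, act, and record each result in memory until done or out of steps."""
    memory: list[str] = []
    for _ in range(MAX_STEPS):
        action = planner(goal, memory)
        if action is None:
            break
        tool_name, arg = action
        if tool_name not in TOOLS:
            memory.append(f"blocked: unknown tool {tool_name}")
        elif tool_name not in ALLOWED_WITHOUT_APPROVAL:
            memory.append(f"blocked: {tool_name} needs human approval")
        else:
            memory.append(TOOLS[tool_name](arg))
    return memory


print(run_agent("refund order A123", stub_planner))
```

In this run the lookup executes and the refund is blocked pending approval, which is the kind of unintended action a guardrail is meant to catch.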

Key Benefits of AI Product Development in 2026

AI product development, done well, compresses time-to-market, reduces manual workload, and creates products that improve with use, a compounding advantage that traditional software can't replicate.

Speed and Efficiency Gains

Research from Harvard Business Review (2026) indicates that AI has the potential to unlock improvements across every stage of the product development process, with teams reporting significant reductions in time spent on research, prototyping, and testing cycles. [5] In practice, this shows up in several measurable ways:

Faster ideation: Generative AI tools can synthesize market research, user interview transcripts, and competitor analysis in hours rather than weeks.

Accelerated prototyping: AI-assisted code generation and no-code AI tooling allow teams to build functional prototypes in days, not sprints.

Automated testing: AI-powered test generation and quality assurance tools catch regressions faster than manual QA cycles.

Continuous optimization: Models that learn from production usage improve over time without requiring full retraining cycles.

Competitive and Strategic Advantages

The strategic case for investing in AI product development goes beyond operational efficiency. Products with AI at their core can build defensible moats through proprietary data flywheels, where each user interaction generates training signal that makes the product better, which attracts more users, which generates more data.

| Benefit Area | Traditional Software | AI-Powered Product |
|---|---|---|
| Improves with use | Requires manual updates | Models retrain on production data |
| Personalization | Rule-based, limited | Adaptive to individual user behavior |
| Handling unstructured data | Requires structured inputs | Processes text, images, audio natively |
| Competitive moat | Feature parity is achievable quickly | Proprietary data flywheel is hard to replicate |
| Automation ceiling | Limited to deterministic tasks | Can automate judgment-heavy workflows |

According to MIT Sloan Management Review, innovation teams are using generative AI to enhance ideation and creativity, gain market and customer insights, and add user-friendly interfaces to existing systems — all areas where the compounding advantage of AI is most visible. [6]

Pro Tip: Map your data flywheel before your first customer conversation. Ask: "What data does each user interaction generate, and how does that data make the product better for the next user?" If you can't answer that question, your AI product may not have a defensible moat.

Common Challenges and Costly Mistakes

AI product development fails more often than it succeeds, and the failure patterns are consistent enough to be predictable, and avoidable with the right preparation.

The Data Problem Is Always Bigger Than You Think

Poor data quality is the most documented cause of AI project failure. Teams consistently underestimate the time required to clean, label, and structure training data. A team we've worked with at Blocklead spent three months building a classification model before discovering that 30% of their training labels were inconsistent — the model had learned the noise, not the signal.

The failure modes around data include:

Distribution mismatch: Training data that doesn't reflect the real-world inputs the model will encounter in production.

Label inconsistency: Human annotators who disagree on edge cases, creating noisy training signal.

Data leakage: Information from the test set inadvertently included in training, producing inflated evaluation metrics that don't hold in production.

Outdated data: Training on historical data that no longer reflects current user behavior or market conditions.
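Two of the failure modes above, data leakage and label inconsistency, can be checked cheaply before any training run. The sketch below is one possible approach under stated assumptions: leakage is approximated as exact text overlap after light normalization (near-duplicates would need fuzzier matching), and annotator agreement is measured with Cohen's kappa. The sample data is made up.

```python
from collections import Counter


def _normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial variants compare equal."""
    return " ".join(text.lower().split())


def leaked_examples(train: list[str], test: list[str]) -> set[str]:
    """Return test examples that also appear in the training set."""
    train_set = {_normalize(t) for t in train}
    return {t for t in test if _normalize(t) in train_set}


def cohens_kappa(labels_a: list[str], labels_b: list[str]) -> float:
    """Agreement between two annotators, corrected for agreement expected by chance."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:  # both annotators used a single identical label throughout
        return 1.0
    return (observed - expected) / (1 - expected)


# Illustrative data only.
train_texts = ["Reset my password", "Where is my order?"]
test_texts = ["reset my  password", "Cancel my plan"]
print(leaked_examples(train_texts, test_texts))

annotator_a = ["spam", "spam", "ham", "ham"]
annotator_b = ["spam", "ham", "ham", "ham"]
print(cohens_kappa(annotator_a, annotator_b))
```

A kappa well below 1 on a sample of doubly-labeled examples is an early signal that the labeling guidelines need tightening before more data is annotated.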

Architectural and Organizational Mistakes

Beyond data, teams make predictable architectural and process mistakes that compound over time:

Skipping evaluation design: Building a model without defining how success will be measured leads to endless iteration with no clear stopping point.

Over-engineering the first version: Spending months building custom model architecture when a fine-tuned foundation model would have shipped faster and performed comparably.

Ignoring production monitoring: Deploying a model without alerting for performance degradation. Model drift (the gradual decline in model accuracy as input distributions shift) is silent without instrumentation.

Treating AI as a feature, not a product: Bolting AI onto an existing product without redesigning the user experience around AI-native interaction patterns.
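For the monitoring gap described above, one common way to instrument input drift is the Population Stability Index (PSI), which compares how a feature was distributed at training time with how it looks in production. This is a sketch under assumptions: the equal-width binning, the `1e-6` floor for empty buckets, and the 0.25 alert threshold (a widely used rule of thumb, not a standard) are illustrative choices.

```python
import math


def population_stability_index(
    baseline: list[float], production: list[float], bins: int = 10
) -> float:
    """PSI for one numeric feature, using equal-width bins over the baseline range."""
    lo, hi = min(baseline), max(baseline)
    if hi == lo:
        raise ValueError("baseline must contain more than one distinct value")
    width = (hi - lo) / bins

    def bucket_shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) when a bucket is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    base = bucket_shares(baseline)
    prod = bucket_shares(production)
    return sum((p - b) * math.log(p / b) for b, p in zip(base, prod))


# Illustrative samples: production inputs have shifted toward higher values.
baseline_scores = [i / 100 for i in range(100)]
production_scores = [0.5 + i / 200 for i in range(100)]

psi = population_stability_index(baseline_scores, production_scores)
ALERT_THRESHOLD = 0.25  # assumed rule-of-thumb cutoff for a significant shift
print(psi > ALERT_THRESHOLD)
```

Running a check like this on a schedule, and alerting when the value crosses the threshold, turns silent drift into a visible event.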

The Lean Institute raises a related concern: teams optimizing purely for model performance metrics while overlooking the human and experiential elements of the product. [7] In practice, this shows up as products that score well on benchmarks but have low user adoption because the interaction design doesn't match how people actually work.

Pro Tip: Run a "pre-mortem" before you start building. Gather your team and ask: "It's 12 months from now and this product failed. What went wrong?" The answers almost always point to data quality, scope creep, or a mismatch between the AI's capabilities and what customers actually needed.

Best Practices for AI Product Development in 2026

The teams shipping successful AI products in 2026 share a set of practices that separate them from teams stuck in endless prototype cycles. These aren't theoretical — they're drawn from production experience.

Framework: The AI Product Development Stack

Delve's research on AI in product development identifies 19 distinct areas where AI creates real value across the product development process, from idea framing through insight generation to launch. [8] The teams that capture the most value don't try to apply AI everywhere at once. They pick the highest-leverage point in their workflow and instrument it thoroughly before expanding.

At Blocklead, we've found that the most effective early-stage AI teams structure their work around three layers:

The data layer: Pipelines for ingestion, cleaning, labeling, and versioning. This is infrastructure, not a one-time task.

The model layer: Selection, fine-tuning or prompting, evaluation, and versioning. Treat models like code — version them, test them, and have rollback plans.

The application layer: The user-facing product, including UX design, API integration, and the feedback mechanisms that route user signals back into the data layer.

Actionable Best Practices

Aubergine's practical guide to AI-driven product development emphasizes that the fastest teams treat this as a continuous process, not a project with a defined end date. [9] The following practices reflect that orientation:

Define your evaluation framework before you start building. Decide what "good" looks like in measurable terms. Use a combination of automated metrics and human evaluation.

Start with the smallest model that could work. Fine-tuned smaller models often outperform large general-purpose models on narrow tasks, at a fraction of the inference cost.

Build your feedback loop into the product from day one. Every user interaction should generate a signal. Design explicit thumbs-up/thumbs-down mechanisms, implicit signals from user behavior, or both.

Instrument production before launch. Set up logging, monitoring, and alerting for model performance, latency, and error rates before the first user touches the product.

Iterate on data before iterating on models. When performance is below target, the answer is usually better data, not a bigger model.

Separate your AI components from your application logic. This makes it possible to swap models, update prompts, or change providers without rewriting the application layer.

Rishabh Software's analysis highlights that teams adopting these structured approaches see measurable reductions in time-to-market and post-launch defect rates compared to ad hoc AI integration. [10]
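The practice of separating AI components from application logic can be sketched with a small interface. The names here (`TextModel`, `EchoModel`, `UppercaseModel`, `summarize_ticket`) are hypothetical, and the two model classes are stand-ins for real provider clients, not wrappers around any actual SDK.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only surface the application layer is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Hypothetical stand-in for one provider's client."""

    def complete(self, prompt: str) -> str:
        return f"[echo] {prompt}"


class UppercaseModel:
    """Hypothetical stand-in for a second provider, swapped in without app changes."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


# Prompts live beside the model layer so they can change without touching app code.
SUMMARY_PROMPT = "Summarize for a customer: {text}"


def summarize_ticket(ticket_text: str, model: TextModel) -> str:
    """Application logic: knows what it wants, not which provider supplies it."""
    return model.complete(SUMMARY_PROMPT.format(text=ticket_text))


print(summarize_ticket("Login fails on mobile", EchoModel()))
print(summarize_ticket("Login fails on mobile", UppercaseModel()))
```

Because `summarize_ticket` depends only on the `TextModel` shape, changing providers or prompt wording becomes a change in one place, and tests can pass in a fake model.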

Sources & References

Future Processing, "AI product development: from idea to measurable business impact," 2026

Scaled Agile, "Implementing AI in Product Development," 2026

Kearney, "AI is revolutionizing product development: from concept to market in record time," 2026

Harvard Business Review, "Reimagining Product Development with AI," 2026

MIT Sloan Management Review, "When Generative AI Meets Product Development," 2026

Lean Institute, "AI in Product Development: Are We Gaining Speed but Losing Soul?" 2026

Delve, "In What Ways Can AI Be Used For Product Development?" 2026

Aubergine, "AI Driven Product Development: A Practical Guide," 2026

Siemens, "AI in product development: How to harness intelligent product design," 2026

Forbes, "How AI Is Revolutionizing New Product Development," 2024

Frequently Asked Questions

1. Why do 85% of AI projects fail?

The 85% figure (originally from Gartner) points to poor data quality as the primary cause, but the full picture is more nuanced. AI projects fail for a cluster of interconnected reasons: training data that doesn't reflect production inputs, evaluation frameworks defined too late or not at all, scope that expands beyond what the model can reliably handle, and a lack of production monitoring that lets model drift go undetected for months. Teams that address data strategy and evaluation design before writing model code fail at significantly lower rates. The fix isn't better models — it's better process.

2. What is the AI product development lifecycle?

The AI product development lifecycle is a continuous loop covering six core stages: problem definition and scoping, data discovery and strategy, model selection and architecture, prototype and evaluation, production deployment, and iteration through continuous improvement. Unlike traditional software development, the lifecycle doesn't end at launch. Model drift, changing user behavior, and new data sources mean that AI products require ongoing maintenance and retraining to stay performant. Teams that treat deployment as the finish line typically see performance degrade within 6-12 months.

3. What tools are used in AI product development?

As of 2026, the core toolset for AI product development spans several categories. For model development, teams use frameworks like PyTorch and JAX alongside hosted APIs from major foundation model providers. For data management, tools like dbt, Great Expectations, and vector databases (Pinecone, Weaviate, pgvector) handle pipeline and retrieval needs. For evaluation and observability, platforms like LangSmith, Weights & Biases, and Arize AI provide the monitoring infrastructure that production AI systems require. For agentic systems specifically, orchestration frameworks like LangGraph and CrewAI have become standard. The right stack depends on your product category and scale.

4. How long does AI product development take?

Timeline depends heavily on the product type and team experience. Applied AI products built on top of existing foundation models can reach a testable prototype in 4-8 weeks and a production-ready v1 in 10-16 weeks with a focused team. Custom model development or proprietary AI infrastructure takes significantly longer — typically 6-18 months to first production deployment. The most common timeline mistake is underestimating data preparation. In practice, data work often consumes 40-60% of total development time on the first version of an AI product.

5. What is the difference between applied AI and agentic systems in product development?

Applied AI products use AI models to perform a specific, bounded task within a product — generating text, classifying inputs, or making a recommendation. The AI component is reactive: it responds to a prompt or input and returns an output. Agentic systems go further. An AI agent perceives its environment, plans a sequence of actions, uses tools (APIs, databases, code execution), and executes autonomously over multiple steps to complete a goal. Agentic systems require memory management, tool integration, and safety guardrails that applied AI products don't need. The engineering complexity is meaningfully higher, and so is the potential value delivered to users.

6. Do I need a large dataset to start AI product development?

Not necessarily. The rise of foundation models and fine-tuning techniques like LoRA (Low-Rank Adaptation) means that many AI products can be built with relatively small, high-quality datasets — sometimes as few as a few hundred labeled examples for a narrow task. What matters more than volume is quality and relevance. A dataset of 500 carefully labeled examples that reflects real production inputs will outperform 50,000 noisy examples scraped without curation. For retrieval-augmented generation (RAG) architectures, you don't need training data at all — you need a well-structured knowledge base and a solid retrieval pipeline.
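The RAG point can be illustrated with a toy retrieval step that needs no training data at all. This sketch makes a deliberate simplification: it scores documents by word-overlap cosine similarity, where a production pipeline would typically use embeddings and a vector database. The knowledge base entries and function names are invented for the example.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(
        documents, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True
    )
    return ranked[:k]


def build_prompt(query: str, documents: list[str]) -> str:
    """Assemble the retrieved context and the question into a single prompt."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


# Hypothetical knowledge base.
KNOWLEDGE_BASE = [
    "Refunds are processed within 5 business days.",
    "Password resets require email verification.",
    "Shipping to Europe takes 7 to 10 days.",
]

print(build_prompt("How long do refunds take?", KNOWLEDGE_BASE))
```

The quality of the answer depends on what `retrieve` returns, which is why the knowledge base structure and the retrieval step deserve as much attention as the model.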

Conclusion

AI product development is not a single skill or a single decision. It's a discipline that spans data engineering, model architecture, user experience design, and production operations. The teams that ship successfully treat each of these layers as a first-class concern from day one, not something to address after the model is "done."

The patterns are consistent. Teams that define success metrics before building, invest in data quality before model selection, and instrument production before launch ship faster and iterate more effectively than teams that skip those steps. Results will vary based on team experience, domain complexity, and data availability — but the underlying process is learnable and repeatable.

At Blocklead, we co-found AI companies with technical founders from day zero, with senior practitioners who write production code across agentic systems, applied AI, and AI infrastructure. If you're at the stage where you have a working prototype and need the operational depth to turn it into a product customers pay for, that's exactly the gap we work in. Our team brings global presence across four offices on three continents, with time-zone-aligned support and honest feedback grounded in what actually ships.

AI product development is hard work. The good news is that the failure modes are well-documented and the best practices are proven. You don't have to learn them all from scratch.

About the Author

Written by the AI venture studio and venture capital team at Blocklead. Our team brings years of hands-on experience in AI venture building and venture capital, delivering practical guidance grounded in real-world results.

Recommended Articles

Explore more from our content library:

Guides

Date

Key Insight | Explanation |

|---|---|

AI product development is end-to-end | It spans discovery, data strategy, model building, deployment, and ongoing iteration — not just writing a model. |

Data quality is the primary failure point | Poor or incomplete training data is cited as the top reason AI projects fail to reach production. |

Agentic systems require different architecture | AI agents that take autonomous action need memory, tool access, and feedback loops that standard ML pipelines don't include. |

Speed to market has compressed dramatically | As of 2026, well-resourced teams are shipping initial AI products in 8-12 weeks using modern tooling and pre-trained foundation models. |

Practitioner involvement beats advisory-only | Teams with engineers who've shipped AI in production make faster, better architectural decisions than those relying on outside consultants. |

Human-centered design still matters | Optimizing purely for model performance while ignoring user experience is a documented cause of low adoption in AI products. |

Table of Contents

What Is AI Product Development?

How AI Product Development Works: The Full Lifecycle

Key Benefits of AI Product Development in 2026

Common Challenges and Costly Mistakes

Best Practices for AI Product Development in 2026

Sources & References

Frequently Asked Questions

Conclusion

Most technical founders have a working prototype before they have a product. That gap, from a demo that impresses in a meeting to a system that holds up under real user load, is exactly where AI product development lives. AI product development is the end-to-end process of designing, building, deploying, and iterating on products that use artificial intelligence as a core functional component. It covers everything from defining the problem worth solving to maintaining model performance after launch. Understanding this process isn't optional for founders building in this space — it's the difference between shipping something customers pay for and spending 18 months rebuilding the same prototype.

This guide covers the full AI product development lifecycle, the most common failure modes (with data to back them up), and the practical frameworks that production teams actually use in 2026.

What Is AI Product Development?

AI product development is the structured process of building products where artificial intelligence drives core functionality — from research and ideation through deployment and continuous improvement. It differs from traditional software development because the behavior of an AI system emerges from data and training, not just code logic.

A Clear Definition Worth Extracting

According to Future Processing, AI product development is "the end-to-end process of designing, building, launching, and scaling products that have artificial intelligence at their core." [1] That's a useful starting point. In practice, it means the development team must manage not just software engineering concerns but also data pipelines, model selection, evaluation frameworks, and feedback loops that keep the system improving post-launch.

Three distinct product types fall under this umbrella:

Applied AI products: Software that uses pre-trained foundation models (large language models, vision models) to deliver a specific user-facing function — think AI writing assistants, document analysis tools, or customer support agents.

Agentic systems: Products where AI agents (autonomous software entities that perceive inputs, reason, and take actions) operate with minimal human intervention to complete multi-step tasks.

AI infrastructure: Developer tools, APIs, and platforms that other teams use to build AI products — model serving infrastructure, vector databases, evaluation frameworks, and observability tooling.

Why the Definition Matters for Founders

The category you're building in determines your architecture, your data strategy, and your go-to-market timeline. A team building an applied AI product on top of an existing foundation model can ship in weeks. A team building proprietary AI infrastructure from scratch is looking at a fundamentally different investment of time and capital.

IBM's research on AI in product development notes that the term covers a wide range of capabilities, from predictive analytics to generative AI to autonomous decision-making systems. [2] Each requires a different skill set and a different production architecture. Getting clear on which one you're building is the first real decision in the process.

Pro Tip: Before writing a single line of model code, write a one-paragraph description of what the AI component does that a non-technical customer could read and immediately understand. If you can't write that paragraph, you haven't defined the product yet.

How AI Product Development Works: The Full Lifecycle

AI product development follows a lifecycle that's more iterative than traditional software development, with explicit stages for data, model evaluation, and production monitoring that have no direct equivalent in conventional engineering.

The Core Stages

The Scaled Agile Framework (SAFe), widely adopted for enterprise AI delivery, describes the AI product lifecycle as a continuous loop rather than a linear sequence. [3] Here's how production teams structure it:

Problem definition and scoping: Identify the specific decision or task the AI will handle. Define success metrics before touching data. A common mistake is scoping too broadly — "improve customer experience with AI" is not a product definition.

Data discovery and strategy: Audit existing data sources, identify gaps, and design collection or labeling pipelines. This stage often takes longer than founders expect. Data quality directly determines model ceiling.

Model selection and architecture: Decide whether to fine-tune an existing foundation model, build a retrieval-augmented generation (RAG) pipeline, train a custom model, or orchestrate multiple models. Each choice has different cost, latency, and maintenance implications.

Prototype and evaluation: Build a minimum testable system and evaluate it against the success metrics defined in stage one. Use held-out test sets and, where possible, real user feedback.

Production deployment: Move from notebook or staging environment to production infrastructure. This includes model serving, monitoring, alerting, and fallback logic.

Iteration and continuous improvement: Collect production feedback, monitor for model drift (degradation in model performance over time due to changing input distributions), and retrain or update the model on a regular cadence.

Where Agentic Systems Change the Equation

Building agentic systems, AI agents that autonomously plan and execute multi-step tasks, adds several layers of complexity. These systems need tool integrations, memory management, and guardrails that prevent agents from taking unintended actions. Kearney's 2026 analysis highlights that AI agents are increasingly supporting specific decisions across the full product lifecycle, providing "deeper, more accurate, and more timely insights" than static models. [4] That capability comes with real engineering overhead that teams need to plan for explicitly.

Key Benefits of AI Product Development in 2026

this method, done well, compresses time-to-market, reduces manual workload, and creates products that improve with use — a compounding advantage that traditional software can't replicate.

Speed and Efficiency Gains

Research from Harvard Business Review (2026) indicates that AI has the potential to unlock improvements across every stage of the product development process, with teams reporting significant reductions in time spent on research, prototyping, and testing cycles. [5] In practice, this shows up in several measurable ways:

Faster ideation: Generative AI tools can synthesize market research, user interview transcripts, and competitor analysis in hours rather than weeks.

Accelerated prototyping: AI-assisted code generation and no-code AI tooling allow teams to build functional prototypes in days, not sprints.

Automated testing: AI-powered test generation and quality assurance tools catch regressions faster than manual QA cycles.

Continuous optimization: Models that learn from production usage improve over time without requiring full retraining cycles.

Competitive and Strategic Advantages

The strategic case for investing in this strategy goes beyond operational efficiency. Products with AI at their core can build defensible moats through proprietary data flywheels, where each user interaction generates training signal that makes the product better, which attracts more users, which generates more data.

Benefit Area | Traditional Software | AI-Powered Product |

|---|---|---|

Improves with use | Requires manual updates | Models retrain on production data |

Personalization | Rule-based, limited | Adaptive to individual user behavior |

Handling unstructured data | Requires structured inputs | Processes text, images, audio natively |

Competitive moat | Feature parity is achievable quickly | Proprietary data flywheel is hard to replicate |

Automation ceiling | Limited to deterministic tasks | Can automate judgment-heavy workflows |

According to MIT Sloan Management Review, innovation teams are using generative AI to enhance ideation and creativity, gain market and customer insights, and add user-friendly interfaces to existing systems — all areas where the compounding advantage of AI is most visible. [6]

Pro Tip: Map your data flywheel before your first customer conversation. Ask: "What data does each user interaction generate, and how does that data make the product better for the next user?" If you can't answer that question, your AI product may not have a defensible moat.

Common Challenges and Costly Mistakes

this approach fails more often than it succeeds, and the failure patterns are consistent enough that they're predictable — and avoidable with the right preparation.

The Data Problem Is Always Bigger Than You Think

Poor data quality is the most documented cause of AI project failure. Teams consistently underestimate the time required to clean, label, and structure training data. A team we've worked with at Blocklead spent three months building a classification model before discovering that 30% of their training labels were inconsistent — the model had learned the noise, not the signal.

The failure modes around data include:

Distribution mismatch: Training data that doesn't reflect the real-world inputs the model will encounter in production.

Label inconsistency: Human annotators who disagree on edge cases, creating noisy training signal.

Data leakage: Information from the test set inadvertently included in training, producing inflated evaluation metrics that don't hold in production.

Outdated data: Training on historical data that no longer reflects current user behavior or market conditions.
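Several of these failure modes can be checked with a few lines of code before any model is trained. The sketch below is a minimal illustration, assuming examples are stored as (text, label) pairs; the function names and the normalization rule (trim and lowercase) are hypothetical choices, not a standard API.

```python
from collections import Counter, defaultdict


def normalize(text):
    # Assumed normalization: trim whitespace and lowercase.
    return text.strip().lower()


def label_conflicts(examples):
    """Label inconsistency: inputs that appear with more than one label."""
    labels_by_text = defaultdict(set)
    for text, label in examples:
        labels_by_text[normalize(text)].add(label)
    return {t: sorted(ls) for t, ls in labels_by_text.items() if len(ls) > 1}


def leaked_examples(train, test):
    """Data leakage: test inputs that also appear in the training set."""
    train_texts = {normalize(text) for text, _ in train}
    test_texts = {normalize(text) for text, _ in test}
    return sorted(train_texts & test_texts)


def label_distribution_gap(train, production):
    """Distribution mismatch: total variation distance between label
    frequencies (0.0 means identical, 1.0 means no overlap)."""
    def freqs(rows):
        counts = Counter(label for _, label in rows)
        total = sum(counts.values())
        return {label: n / total for label, n in counts.items()}

    p, q = freqs(train), freqs(production)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in set(p) | set(q))


train = [
    ("refund please", "billing"),
    ("Refund please ", "support"),   # same input, different label
    ("reset password", "account"),
]
test = [("reset password", "account"), ("cancel plan", "billing")]

print(label_conflicts(train))
print(leaked_examples(train, test))
print(round(label_distribution_gap(train, test), 3))
```

Exact-match checks like these only catch the obvious cases. Near-duplicates and subtle distribution shifts need fuzzier comparisons, but running the cheap checks first often surfaces problems early.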

Architectural and Organizational Mistakes

Beyond data, teams make predictable architectural and process mistakes that compound over time:

Skipping evaluation design: Building a model without defining how success will be measured leads to endless iteration with no clear stopping point.

Over-engineering the first version: Spending months building custom model architecture when a fine-tuned foundation model would have shipped faster and performed comparably.

Ignoring production monitoring: Deploying a model without alerting for performance degradation. Model drift (the gradual decline in model accuracy as input distributions shift) is silent without instrumentation.

Treating AI as a feature, not a product: Bolting AI onto an existing product without redesigning the user experience around AI-native interaction patterns.
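To make the monitoring point concrete, here is a minimal sketch of one common drift signal, the Population Stability Index (PSI), which compares how a numeric feature or model score is distributed at training time versus in production. The bin count, the smoothing constant, and the 0.25 alert threshold are assumptions for illustration (0.25 is a widely used rule of thumb, not a universal standard), and `drift_alert` is a hypothetical helper name.

```python
import math


def psi(baseline, current, bins=10, eps=1e-6):
    """Population Stability Index between two non-empty lists of numbers.

    Bins are equal-width over the baseline's range; production values
    outside that range are clamped into the first or last bin.
    """
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant data

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    b, c = shares(baseline), shares(current)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))


def drift_alert(baseline, current, threshold=0.25):
    """Return True when the shift is large enough to page someone."""
    return psi(baseline, current) > threshold


training_scores = [i / 100 for i in range(100)]          # spread over 0..1
production_scores = [0.5 + i / 200 for i in range(100)]  # crowded into 0.5..1

print(drift_alert(training_scores, training_scores))
print(drift_alert(training_scores, production_scores))
```

A check like this can run on a schedule against logged inputs, so that drift produces an alert instead of a slow, silent accuracy decline.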

The Lean Institute raises a related concern: teams optimizing purely for model performance metrics while overlooking the human and experiential elements of the product. [7] In practice, this shows up as products that score well on benchmarks but have low user adoption because the interaction design doesn't match how people actually work.

Pro Tip: Run a "pre-mortem" before you start building. Gather your team and ask: "It's 12 months from now and this product failed. What went wrong?" The answers almost always point to data quality, scope creep, or a mismatch between the AI's capabilities and what customers actually needed.

Best Practices for AI Product Development in 2026

The teams shipping successful AI products in 2026 share a set of practices that separate them from teams stuck in endless prototype cycles. These aren't theoretical — they're drawn from production experience.

Framework: The AI Product Development Stack

Delve's research on AI in product development identifies 19 distinct areas where AI creates real value across the product development process, from idea framing through insight generation to launch. [8] The teams that capture the most value don't try to apply AI everywhere at once. They pick the highest-leverage point in their workflow and instrument it thoroughly before expanding.

At Blocklead, we've found that the most effective early-stage AI teams structure their work around three layers:

The data layer: Pipelines for ingestion, cleaning, labeling, and versioning. This is infrastructure, not a one-time task.

The model layer: Selection, fine-tuning or prompting, evaluation, and versioning. Treat models like code — version them, test them, and have rollback plans.

The application layer: The user-facing product, including UX design, API integration, and the feedback mechanisms that route user signals back into the data layer.
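The "treat models like code" idea in the model layer can be sketched as a small registry that gates promotion on an evaluation score and keeps enough history to roll back. This is an in-memory illustration only; `ModelRegistry`, its method names, and the score threshold are hypothetical, and a real team would more likely use a dedicated model registry or artifact store.

```python
from dataclasses import dataclass, field


@dataclass
class ModelRegistry:
    versions: dict = field(default_factory=dict)  # version -> (artifact, eval score)
    history: list = field(default_factory=list)   # promoted versions, newest last

    def register(self, version, artifact, eval_score):
        """Record a candidate model together with its evaluation score."""
        self.versions[version] = (artifact, eval_score)

    def promote(self, version, min_score):
        """Make a version live only if it clears the evaluation bar."""
        _, score = self.versions[version]
        if score < min_score:
            raise ValueError(f"{version} scored {score}, below {min_score}")
        self.history.append(version)

    def rollback(self):
        """Revert to the previously promoted version and return its name."""
        if len(self.history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self.history.pop()
        return self.history[-1]

    @property
    def live(self):
        return self.history[-1] if self.history else None


registry = ModelRegistry()
registry.register("v1", "weights-v1.bin", eval_score=0.82)
registry.register("v2", "weights-v2.bin", eval_score=0.88)
registry.promote("v1", min_score=0.80)
registry.promote("v2", min_score=0.80)
print(registry.live)
print(registry.rollback())
```

The design choice worth noting is that promotion and rollback are explicit operations with a recorded history, rather than overwriting whatever model file the application happens to load.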

Actionable Best Practices

Aubergine's practical guide to AI-driven product development emphasizes that the fastest teams treat AI product development as a continuous process, not a project with a defined end date. [9] The following practices reflect that orientation:

Define your evaluation framework before you start building. Decide what "good" looks like in measurable terms. Use a combination of automated metrics and human evaluation.

Start with the smallest model that could work. Fine-tuned smaller models often outperform large general-purpose models on narrow tasks, at a fraction of the inference cost.

Build your feedback loop into the product from day one. Every user interaction should generate a signal. Design explicit thumbs-up/thumbs-down mechanisms, implicit signals from user behavior, or both.

Instrument production before launch. Set up logging, monitoring, and alerting for model performance, latency, and error rates before the first user touches the product.

Iterate on data before iterating on models. When performance is below target, the answer is usually better data, not a bigger model.

Separate your AI components from your application logic. This makes it possible to swap models, update prompts, or change providers without rewriting the application layer.
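Two of these practices, defining evaluation up front and separating AI components from application logic, fit together naturally. The sketch below assumes a ticket-routing product; the `TextClassifier` interface, the keyword baseline, the evaluation cases, and the queue names are all hypothetical. The point is that the application and the evaluation harness depend only on the interface, so a fine-tuned model or a hosted API can replace the baseline without touching either.

```python
from typing import Protocol


class TextClassifier(Protocol):
    """The only contract the application knows about."""
    def classify(self, text: str) -> str: ...


class KeywordClassifier:
    """Stand-in baseline; a real model would expose the same method."""
    def __init__(self, rules, default):
        self.rules = rules
        self.default = default

    def classify(self, text: str) -> str:
        lowered = text.lower()
        for keyword, label in self.rules.items():
            if keyword in lowered:
                return label
        return self.default


# Evaluation cases are written before any model exists.
EVAL_CASES = [
    ("I was charged twice", "billing"),
    ("Cannot log in to my account", "account"),
    ("Please refund my invoice", "billing"),
    ("The app feels slow today", "other"),
]


def accuracy(model: TextClassifier, cases) -> float:
    """Automated metric: share of cases the model labels correctly."""
    hits = sum(model.classify(text) == expected for text, expected in cases)
    return hits / len(cases)


def route_ticket(model: TextClassifier, text: str) -> str:
    """Application logic: depends on the interface, not on a provider."""
    queues = {"billing": "finance-queue", "account": "identity-queue"}
    return queues.get(model.classify(text), "general-queue")


baseline = KeywordClassifier(
    {"charged": "billing", "refund": "billing", "log in": "account"},
    default="other",
)
print(accuracy(baseline, EVAL_CASES))
print(route_ticket(baseline, "Please refund me"))
```

Any replacement model can be scored against the same `EVAL_CASES` before it is allowed near `route_ticket`, which gives "good" a measurable definition from the first day.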

Rishabh Software's analysis highlights that teams adopting these structured approaches see measurable reductions in time-to-market and post-launch defect rates compared to ad hoc AI integration. [10]

Sources & References

Future Processing, "AI product development: from idea to measurable business impact," 2026

Scaled Agile, "Implementing AI in Product Development," 2026

Kearney, "AI is revolutionizing product development: from concept to market in record time," 2026

Harvard Business Review, "Reimagining Product Development with AI," 2026

MIT Sloan Management Review, "When Generative AI Meets Product Development," 2026

Lean Institute, "AI in Product Development: Are We Gaining Speed but Losing Soul?" 2026

Delve, "In What Ways Can AI Be Used For Product Development?" 2026

Aubergine, "AI Driven Product Development: A Practical Guide," 2026

Siemens, "AI in product development: How to harness intelligent product design," 2026

Forbes, "How AI Is Revolutionizing New Product Development," 2024

Frequently Asked Questions

1. Why do 85% of AI projects fail?

The 85% figure is widely attributed to Gartner and is usually cited with poor data quality as the primary cause, but the full picture is more nuanced. AI projects fail for a cluster of interconnected reasons: training data that doesn't reflect production inputs, evaluation frameworks defined too late or not at all, scope that expands beyond what the model can reliably handle, and a lack of production monitoring that lets model drift go undetected for months. Teams that address data strategy and evaluation design before writing model code fail at significantly lower rates. The fix isn't better models; it's better process.

2. What is the AI product development lifecycle?

The AI product development lifecycle is a continuous loop covering six core stages: problem definition and scoping, data discovery and strategy, model selection and architecture, prototype and evaluation, production deployment, and iteration through continuous improvement. Unlike traditional software development, the lifecycle doesn't end at launch. Model drift, changing user behavior, and new data sources mean that AI products require ongoing maintenance and retraining to stay performant. Teams that treat deployment as the finish line typically see performance degrade within 6-12 months.

3. What tools are used in AI product development?

As of 2026, the core toolset for AI product development spans several categories. For model development, teams use frameworks like PyTorch and JAX alongside hosted APIs from major foundation model providers. For data management, tools like dbt, Great Expectations, and vector databases (Pinecone, Weaviate, pgvector) handle pipeline and retrieval needs. For evaluation and observability, platforms like LangSmith, Weights & Biases, and Arize AI provide the monitoring infrastructure that production AI systems require. For agentic systems specifically, orchestration frameworks like LangGraph and CrewAI have become standard. The right stack depends on your product category and scale.

4. How long does AI product development take?

Timeline depends heavily on the product type and team experience. Applied AI products built on top of existing foundation models can reach a testable prototype in 4-8 weeks and a production-ready v1 in 10-16 weeks with a focused team. Custom model development or proprietary AI infrastructure takes significantly longer — typically 6-18 months to first production deployment. The most common timeline mistake is underestimating data preparation. In practice, data work often consumes 40-60% of total development time on the first version of an AI product.

5. What is the difference between applied AI and agentic systems in product development?

Applied AI products use AI models to perform a specific, bounded task within a product — generating text, classifying inputs, or making a recommendation. The AI component is reactive: it responds to a prompt or input and returns an output. Agentic systems go further. An AI agent perceives its environment, plans a sequence of actions, uses tools (APIs, databases, code execution), and executes autonomously over multiple steps to complete a goal. Agentic systems require memory management, tool integration, and safety guardrails that applied AI products don't need. The engineering complexity is meaningfully higher, and so is the potential value delivered to users.
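The difference is easier to see in code. The sketch below is a deliberately small agent loop with the pieces named above: a planner, tools, memory, and a step budget acting as a basic guardrail. Everything here is hypothetical; `scripted_planner` is a hard-coded stand-in for the model call that would choose the next action in a real system, and `lookup_order` is an invented tool.

```python
def run_agent(goal, planner, tools, max_steps=5):
    """Plan, act, observe, repeat until the planner finishes or the
    step budget runs out. Returns (answer, memory)."""
    memory = []  # list of (tool name, argument, observation)
    for _ in range(max_steps):
        action = planner(goal, memory)
        if action["tool"] == "finish":
            return action["arg"], memory
        tool = tools.get(action["tool"])
        if tool is None:
            observation = f"error: unknown tool {action['tool']}"
        else:
            observation = tool(action["arg"])
        memory.append((action["tool"], action["arg"], observation))
    return None, memory  # guardrail: stop instead of looping forever


def lookup_order(order_id):
    # Invented tool standing in for an API or database call.
    return {"A-17": "shipped"}.get(order_id, "not found")


def scripted_planner(goal, memory):
    # Stand-in for a model: first look the order up, then answer
    # using the observation stored in memory.
    if not memory:
        return {"tool": "lookup_order", "arg": "A-17"}
    return {"tool": "finish", "arg": f"Order A-17 is {memory[-1][2]}"}


TOOLS = {"lookup_order": lookup_order}

answer, trace = run_agent("Where is order A-17?", scripted_planner, TOOLS)
print(answer)
print(trace)
```

An applied AI feature would be a single call to the model with one output. The loop, the memory, and the step limit are the extra engineering an agentic system takes on.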

6. Do I need a large dataset to start AI product development?

Not necessarily. The rise of foundation models and fine-tuning techniques like LoRA (Low-Rank Adaptation) means that many AI products can be built with relatively small, high-quality datasets — sometimes as few as a few hundred labeled examples for a narrow task. What matters more than volume is quality and relevance. A dataset of 500 carefully labeled examples that reflects real production inputs will outperform 50,000 noisy examples scraped without curation. For retrieval-augmented generation (RAG) architectures, you don't need training data at all — you need a well-structured knowledge base and a solid retrieval pipeline.
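As a rough illustration of the RAG point, the sketch below retrieves the most relevant knowledge-base entries for a question and assembles a prompt, with no training data involved. It uses simple word-count cosine similarity so that it runs with the standard library alone; a production pipeline would more likely use embeddings and a vector database. The knowledge-base entries, function names, and prompt wording are all invented for the example.

```python
import math
import re
from collections import Counter


def tokenize(text):
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=2):
    """Return up to k documents that share vocabulary with the query,
    most similar first."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(d))), d) for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:k] if score > 0]


def build_prompt(query, documents, k=2):
    """Assemble the text that would be sent to a foundation model."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


KNOWLEDGE_BASE = [
    "Refunds are processed within 5 business days.",
    "Password resets require email verification.",
    "Enterprise plans include priority support.",
]

print(build_prompt("How long do refunds take?", KNOWLEDGE_BASE, k=1))
```

The quality of the answer then depends on how well the knowledge base is structured and how good the retrieval step is, which is why both deserve the same care as training data would.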

Conclusion

AI product development is not a single skill or a single decision. It's a discipline that spans data engineering, model architecture, user experience design, and production operations. The teams that ship successfully treat each of these layers as a first-class concern from day one, not something to address after the model is "done."

The patterns are consistent. Teams that define success metrics before building, invest in data quality before model selection, and instrument production before launch ship faster and iterate more effectively than teams that skip those steps. Results will vary based on team experience, domain complexity, and data availability — but the underlying process is learnable and repeatable.

At Blocklead, we co-found AI companies with technical founders from day zero, with senior practitioners who write production code across agentic systems, applied AI, and AI infrastructure. If you're at the stage where you have a working prototype and need the operational depth to turn it into a product customers pay for, that's exactly the gap we work in. Our team brings global presence across four offices on three continents, with time-zone-aligned support and honest feedback grounded in what actually ships.

AI product development is hard work. The good news is that the failure modes are well-documented and the best practices are proven. You don't have to learn them all from scratch.

About the Author

Written by the AI venture studio team at Blocklead. Our team brings years of hands-on experience co-founding and building AI companies, delivering practical guidance grounded in real-world results.
